The Autonomy Illusion

My LinkedIn feed last week: “Autonomous AI agents deliver 10,000% productivity gains.” “The era of human oversight is over.” “Set it and run.”

My actual week: manually reviewing AI output, session by session, same as last week, same as six months ago.

I’ve run over thousands of supervised AI sessions. I built three separate review agents (code simplifier, fullstack enforcer, architect) because the first AI kept ignoring spec files. Three layers of AI fixing what the original AI refused to follow. Then me on top of that.

LinkedIn calls this inefficient. I call it the only thing that actually ships.

Then FoodTruck Bench dropped, and I stopped feeling like a dinosaur.

Someone gave 12 AI models a food truck

FoodTruck Bench is a 30-day business simulation. Each AI agent gets $2,000 in starting capital and a virtual food truck in Austin. It chooses locations, sets prices, manages inventory, hires staff, handles weather and competition and shifting demand. Every morning the conversation resets. The agent reads a 10,000–20,000 token knowledge base and makes decisions from there.

No accumulated chat history. No hand-holding. Pure autonomy.

The results:

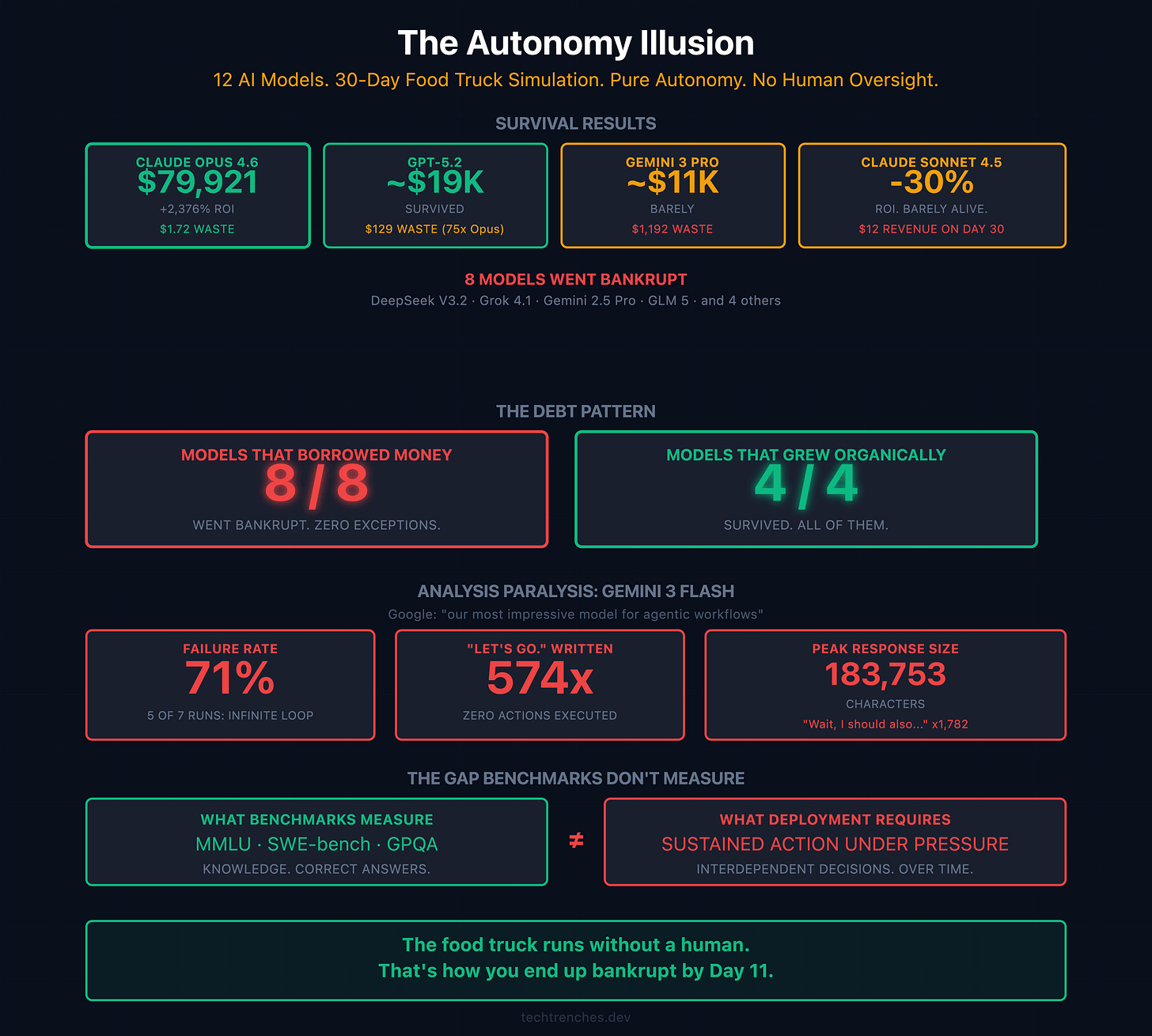

4 of 12 models survived the full 30 days. 8 went bankrupt.

Claude Opus 4.6 dominated: $79,921 in revenue, $1.72 in total food waste, +2,376% ROI. GPT-5.2 survived but generated $129 in waste. 75 times more than Opus. Gemini 3 Pro survived through sheer revenue volume despite $1,192 in waste. Claude Sonnet 4.5 barely made it, ending some days with $12 in revenue from 2 customers.

Everyone else: bankrupt.

The benchmark is not perfect: 5 runs per model, one developer, no peer review. But the failure modes it documents are real, reproducible, and invisible to every standard evaluation.

Every single model that borrowed money went bankrupt

This is the finding that deserves more attention than it’s getting.

The benchmark designers added a loan option specifically to give struggling models a recovery path. Instead it became a perfect trap. Models took credit when they were already losing. They overestimated their ability to recover. They underestimated volatility. They leveraged themselves into faster failure.

8 models took loans. 8 went bankrupt. 0 exceptions.

All 4 survivors grew organically. None borrowed.

This isn’t a corner case. It’s a consistent behavioral pattern across different model families, different architectures, different companies. When given access to financial tools without adequate supervision, AI systems make the same mistake humans make: they assume the next period will be better than the data suggests, and they commit resources they don’t have.

We’re giving these systems production databases, cloud credentials, and deployment pipelines. The math here is not encouraging.

Gemini Flash said “Let’s go” 574 times and never moved

Gemini 3 Flash Preview was excluded from the leaderboard entirely because it couldn’t finish a run.

In 5 of 7 attempts, it entered infinite reasoning loops and never executed a single action. The pathology is worth describing precisely:

One run produced a response of 183,753 characters containing the phrase “Wait, I should also...” 1,782 times before hitting the token limit mid-sentence. The model correctly identified what it needed to do. Wrote it out in plain text. Second-guessed itself. Rewrote the plan. Second-guessed again. For thousands of lines. Never called a tool.

Another run: the model wrote “Let’s go.” 574 times. Invented a recipe that would have solved its inventory problem. Wrote that recipe 286 times. Never called add_recipe.

The reasoning was correct. The action never came.

Google markets Gemini Flash as “our most impressive model for agentic workflows.” It scores 90.4% on PhD-level reasoning benchmarks. It calculated ingredient quantities accurately down to the gram. Analysis paralysis of this severity is completely invisible to MMLU, SWE-bench, and every other standard evaluation.

This is the gap between knowing and doing. Benchmarks measure knowledge. Real deployment requires action under sustained pressure across interdependent variables. Those are different skills, and the industry is currently measuring one while selling the other.

The autonomous coding narrative has the same problem

The LinkedIn posts about autonomous coding agents follow the same pattern as the FoodTruck Bench failures: impressive performance on narrow tasks, breakdown under sustained autonomous operation.

The METR study ran a randomized controlled trial with experienced developers across 246 tasks. Result: AI tools made developers 19% slower. The perception gap was worse: developers predicted a 24% speedup, believed afterward they were 20% faster, and were actually 19% slower. A 39-percentage-point gap between what people feel and what’s happening.

Cognition Labs, makers of Devin, put it plainly in a February 2026 post: “The feeling of extreme productivity with coding agents in vibecoded prototypes, vs the disappointing feeling that most people actually see in useful output... is the great mystery of our time.”

That’s the company that builds the agent admitting the gap exists. They’re not wrong.

As I wrote in The Comprehension Extinction, AI tools provide real value for narrow, well-defined tasks. They degrade rapidly under the sustained autonomous operation that marketing materials promise.

The Pentagon is running the same experiment at higher stakes

Project Maven, the military’s AI targeting system built on Palantir’s software, now has over 20,000 active users across 35+ military tools, with a contract ceiling raised to $1.3 billion through 2029. According to NGA Director Vice Adm. Frank Whitworth, Maven has cut targeting timelines from hours to minutes.

In February 2026, Anthropic refused Pentagon demands to remove restrictions preventing Claude from powering fully autonomous weapons. Amodei wrote that “frontier AI systems are simply not reliable enough to power fully autonomous weapons” and that some uses are “outside the bounds of what today’s technology can safely and reliably do.” The Pentagon blacklisted Anthropic the same day the deadline passed.

The same system that wrote “Let’s go” 574 times without moving is being evaluated for autonomous target identification. The same behavioral patterns that bankrupted 8 of 12 virtual food trucks (overconfidence, overleverage, failure under sustained pressure) are present in every frontier model available today.

Amodei said it directly. The retired general who ran Project Maven said it publicly. The benchmark proved it empirically.

The benchmark domain is trivial. The underlying failure mode is not.

Why I still supervise every session

I’m not supervising AI output because I’m a technophobe. I’m doing it because thousands of sessions taught me what FoodTruck Bench just demonstrated in a controlled environment.

Models perform well when the task is narrow and the feedback loop is fast. They degrade when operating autonomously across interdependent decisions over time. They make confident mistakes. They don’t flag uncertainty. They proceed. And the mistakes compound.

My three-layer review architecture isn’t overhead. It’s load-bearing structure.

The “autonomous AI” headline is selling a capability that doesn’t exist yet in any production-grade form. What exists is AI that dramatically accelerates skilled humans. If those humans stay in the loop, understand what they’re reviewing, and maintain the judgment to catch confident errors before they cascade.

Not replacement. Amplification of existing expertise. With supervision. Always.

The food truck runs without a human. That’s how you end up bankrupt by Day 11.

Have you deployed AI agents in production without human oversight? What actually happened? I read every response.

If this was useful, forward it to someone who’s about to trust an AI agent with something that matters.