The Snake That Ate Itself: What Claude Code’s Source Revealed About AI Engineering Culture

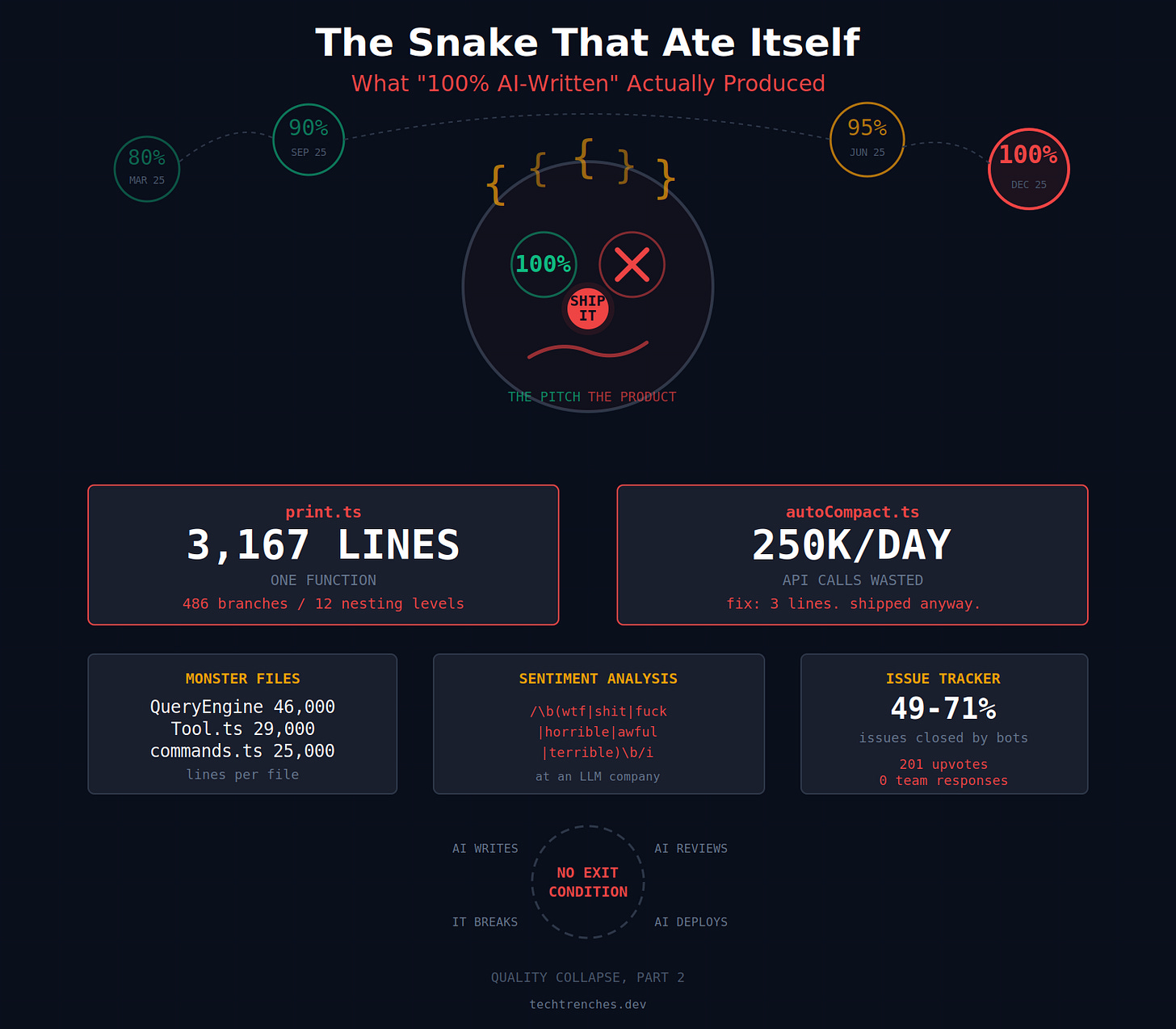

On December 27, 2025, Anthropic’s lead engineer Boris Cherny posted on X: “In the last thirty days, 100% of my contributions to Claude Code were written by Claude Code.” 259 pull requests. 497 commits. 40,000 lines added. 1.3 million views. The tech world applauded.

Three months later, a packaging mistake exposed 512,000 lines of that code to the public. Leaks happen. Companies recover. The leak isn’t the story.

The code is the story.

64,464 lines of core TypeScript serving paying customers. A single function spanning 3,167 lines. Regex for sentiment analysis at a company that builds the world’s most advanced language model. A known bug burning 250,000 API calls daily, documented in a comment and shipped anyway.

Anthropic responded to the leak. Packaging error. Human mistake. No one fired. They never responded to the code. Because the leak was an accident. The code was a choice.

The Auction Nobody Won

To understand what happened, you need to watch the numbers climb.

March 2025. CEO Dario Amodei at the Council on Foreign Relations: “We’re 3 to 6 months from a world where AI is writing 90% of the code.”

May 2025. Boris Cherny on the Latent Space podcast: “Maybe 80-90% Claude-written code overall.”

September 2025. Amodei again, hedging now: “70, 80, 90% of the code written at Anthropic is written by Claude.” Notice the range. 70 is not 90. But journalists ran with 90.

October 2025. Amodei at Dreamforce with Marc Benioff: “I made this prediction that in six months, 90% of code would be written by AI models. That is absolutely true now.” When Benioff pressed, Amodei walked it back: “Not uniformly.”

December 2025. Cherny’s tweet. 100%.

February 2026. CPO Mike Krieger at Cisco AI Summit: “Right now for most products at Anthropic, it’s effectively 100%.”

March 7, 2026. Cherny confirmed again: “Claude Code is 100% written by Claude Code.”

March 31, 2026. The source map leaked.

Every two to three months, the number went up like a bidding war where the bidder is also the auctioneer. A LessWrong analysis later called these claims “misleading/hype-y,” noting the metrics were never defined. Is it 90% of lines committed? 90% of engineering effort? 90% of characters typed? The distinction matters enormously. Anthropic never clarified. The ambiguity was the point.

What 100% Looks Like in Practice

So the number reached 100%. Then the source leaked. And for the first time, anyone could see what 100% actually produced.

A file called print.ts contained a single function spanning 3,167 lines with 486 branch points and 12 levels of nesting. One HN commenter catalogued what lived inside that function: the agent run loop, SIGINT handling, rate limiting, AWS authentication, MCP lifecycle management, plugin loading, team-lead polling via a while(true) loop, model switching, and turn interruption recovery. His verdict: this should be 8 to 10 separate modules. Nobody disagreed.

QueryEngine.ts ran 46,000 lines. Tool.ts hit 29,000. commands.ts reached 25,000. The entry point main.tsx was 785 KB.

But the detail that spread fastest was the regex. In userPromptKeywords.ts, the company with the world’s most advanced language model was detecting user frustration with: /\b(wtf|shit|fuck|horrible|awful|terrible)\b/i

Pattern matching for sentiment analysis. At an LLM company. One HN commenter delivered the line everyone quoted: that’s like a trucking company using horses to haul parts. Defenders argued regex is faster and cheaper than an inference call. They’re right. But that’s the engineering culture talking. Cheap beats correct. Fast beats good. Ship it.
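To make that trade-off concrete, here is a minimal sketch of what keyword matching can and cannot see. The pattern is the one quoted above. The helper name `looksFrustrated` and the sample prompts are hypothetical, written for illustration, and are not taken from the leaked source.

```typescript
// The pattern as quoted in the article from userPromptKeywords.ts.
const FRUSTRATION_PATTERN = /\b(wtf|shit|fuck|horrible|awful|terrible)\b/i;

// Hypothetical helper: the real call site in the leaked code is not shown here.
function looksFrustrated(prompt: string): boolean {
  return FRUSTRATION_PATTERN.test(prompt);
}

// Illustrative prompts (assumptions, not real user data).
const samples: string[] = [
  "WTF, the build broke again",            // keyword present, case-insensitive match
  "this is not terrible at all",           // keyword present, but the user is not upset
  "I have asked three times. Still wrong.", // clearly upset, no keyword
  "fix the awfully slow query",            // "awfully" fails the \b word boundary
];

for (const prompt of samples) {
  console.log(`${looksFrustrated(prompt)}\t${prompt}`);
}
```

The first two prompts match and the last two do not. The regex costs almost nothing to run, which is the defenders' point, but it flags a calm sentence and misses an angry one, which is the critics' point.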

What This Code Does in Production

Bad structure is one thing. You can argue it’s style. But the leaked source also showed what happens when code like this runs at scale.

A comment in autoCompact.ts became a symbol: “1,279 sessions had 50+ consecutive failures (up to 3,272) in a single session, wasting ~250K API calls/day globally.”

The fix was three lines of code. Set a maximum failure threshold, then disable compaction for the session. Three lines to stop burning a quarter million API calls daily. Someone knew about the problem. Someone wrote the comment documenting it. Then they shipped it anyway.
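The shape of that fix is a per-session circuit breaker. What follows is a minimal sketch under stated assumptions, not the code Anthropic shipped: the threshold value, the `CompactionState` type, and the function names are all hypothetical, and the compaction call is kept synchronous for brevity where a real one would be asynchronous.

```typescript
// Hypothetical threshold: the article says only that a maximum was needed.
const MAX_CONSECUTIVE_FAILURES = 3;

// Hypothetical per-session bookkeeping.
interface CompactionState {
  consecutiveFailures: number;
  disabled: boolean;
}

function newCompactionState(): CompactionState {
  return { consecutiveFailures: 0, disabled: false };
}

// `compact` stands in for whatever call performs compaction.
// Returns true only when compaction ran and succeeded.
function tryAutoCompact(state: CompactionState, compact: () => void): boolean {
  if (state.disabled) return false; // breaker is open: no further API calls
  try {
    compact();
    state.consecutiveFailures = 0; // a success resets the streak
    return true;
  } catch {
    state.consecutiveFailures += 1;
    if (state.consecutiveFailures >= MAX_CONSECUTIVE_FAILURES) {
      state.disabled = true; // stop retrying for the rest of the session
    }
    return false;
  }
}

// Usage: a session whose compaction always fails, retried 50 times.
const session = newCompactionState();
let apiCalls = 0;
const alwaysFails = (): void => {
  apiCalls += 1;
  throw new Error("compaction failed");
};
for (let attempt = 0; attempt < 50; attempt++) {
  tryAutoCompact(session, alwaysFails);
}
console.log(`${apiCalls} calls made, compaction disabled: ${session.disabled}`);
// prints "3 calls made, compaction disabled: true"
```

With the breaker in place, a session that would have failed 50 times in a row makes 3 calls instead of 50. The logic that matters is the counter increment, the comparison, and the disable flag; everything else is scaffolding.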

Memory consumption told a similar story. Community benchmarks showed 7 Claude Code processes consuming 5.3 GB of RAM. GitHub issues documented worse: one process allocating 36.5 GB peak on an 18 GB machine. Another reaching 93 GB heap allocation within five minutes.

And the issue tracker itself was automated into silence. A Claude Sonnet-powered deduplication bot processed every new issue. A sweep bot marked issues stale after 14 days and closed them 14 days later. A lock bot prevented comments on closed issues after 7 days. An analysis estimated that 49 to 71% of all 26,792 issue closures were bot-driven. Issue #38335 had 201 upvotes and zero team responses. Labeled “invalid.”

“Go Faster, Not More Process”

Documented bugs. Wasted API calls. Users filing issues that bots close. All of this was visible before the leak. The leak just confirmed it was a choice, not an oversight. And when the leak happened, the response confirmed the choice was deliberate.

Cherny acknowledged the human error: “Our deploy process has a few manual steps, and we didn’t do one of the steps correctly.” Then he added: “Like with any other incident, the counter-intuitive answer is to solve the problem by finding ways to go faster, rather than introducing more process. In this case more automation & claude checking the results.”

This isn’t one person’s opinion. It’s the team philosophy. As one commenter in the HN thread explained: “The claude code team ethos is that there is no point in code-reviewing ai-generated code. Simply update your spec and regenerate.”

Read that again. The response to leaking code with a 3,167-line function, a regex for sentiment analysis, and bugs that basic integration tests would catch is not to add tests. Not to add code review. Not to add process. It’s to go faster. Regenerate. And have Claude check Claude’s work.

This is the ouroboros. The snake eating its own tail. AI writes the code. AI reviews the code. AI checks the deployment. When it breaks, the answer is more AI. The loop has no exit condition.

As I wrote in Quality Collapse, we’ve normalized catastrophe in software engineering. That piece tracked an industry-wide pattern: ship broken, fix later, throw hardware at the problem. Claude Code is no longer an example of the pattern. It’s the specimen.

Where Does This Philosophy Stop?

If “don’t review, regenerate” is how they build the product, it raises an obvious question: what about the code you can’t see?

Engineering culture doesn’t have a switch. The team that ships print.ts with 12 levels of nesting doesn’t suddenly become disciplined when writing model training code. Same people. Same processes. Same code reviews, or lack of them.

They justified the leak. They explained the packaging error. They didn’t justify the code. That silence tells you everything. The quality is fine by them. This is how they build things. On purpose.

There are indirect signals that the rot goes deeper. Eight service outages in a single month. A source map leak that happened twice (the first was quietly patched in early 2025). An Axios dependency that was compromised by a supply chain attack on the same day as the leak. 74 npm dependencies for what is essentially a CLI wrapper around an API.

And here’s the pattern that makes it sustainable, temporarily: when you have billions in revenue and functionally unlimited compute, you feed technical debt with resources instead of fixing it. The function is 3,167 lines? Don’t refactor, add more RAM. The autoCompact bug burns 250,000 API calls? The margin absorbs it. The model regresses? Throw more GPU hours at training.

This works while money flows. Anthropic is a startup that scaled faster than it could build engineering practices. The recursive loop of AI-writes-AI-checks-AI-fixes masks the absence of fundamentals. But compute gets expensive. Revenue cycles turn. And technical debt that was papered over with resources becomes a debt trap with no exit.

The Uncomfortable Truth

The company that sells AI coding tools cannot build a quality product with its own AI coding tools. The percentages were always the pitch, not the product. 80. 90. 95. 100. Nobody asked what 100% actually produces until the source code answered for them.

AI amplifies whatever is already there. Good discipline becomes great output. No discipline becomes technical debt at machine speed. Anthropic chose a direction. Go faster. Have Claude check Claude. And when it breaks, go faster still.

If this is the new quality standard from the company pulling our industry forward, then I’m not sure I want to go where the industry is going.

My grandfather was an electrical engineer. He told me: do it well, or don’t do it at all. Simple rule. It guided how I built teams, how I shipped software, how I evaluated every project for 13 years. Quality wasn’t a feature. It was the floor.

That floor is gone. Quality is a relic now. Nobody wants it. Nobody pays for it. Nobody measures it. The metric is velocity. The metric is percentage of code generated. The metric is how fast you can ship a 3,167-line function that burns a quarter million API calls daily and call it 100% AI-written.

I’m seriously considering a pivot to security. Leaks, supply chain attacks, and production code that reads like a rough draft are the new normal. Someone will need to clean up after the vibe coders. That’s a growth industry.

Or maybe I’ll become an electrician. My grandfather’s trade. At least when you wire a panel correctly, it stays correct. No one ships a hot fix that reverses your ground fault protection. No bot auto-closes your inspection report after 28 days.

One thing I know for certain: I don’t want to move in the direction this industry is heading. And if a 3,167-line function with 486 branch points is what “100% AI-written” looks like at the company building the future, the future needs better engineering. Not faster engineering. Better.

I was a huge fan of Anthropic. Was.

I’ve been in the software industry for 21 years and worked on several software startups. In my experience, the attitude and the culture that this article speaks about aren’t new.

All CEOs, all CTOs, and most project managers I’ve worked with have had this attitude of disregarding technical debt and shipping lower-quality code. It’s no surprise to me that that culture exists within Anthropic and I’m reasonably certain that it’s no different in any other AI company.

What makes any of this new, and much worse for the industry as a whole, and for customers, is that now that culture is automated.

What used to be the occasional “garbage in, garbage out” is now going to be a frequent “toilet flush in, toilet flush out”.

I am a non-professional hobby coder. For years, I have been trying to follow all the advice for good and clean code. This caused me a lot of headaches and increased complexity. And now I have to learn here that neither the pros nor the coding agents care about that.

This article also shattered my illusion that Claude Code is the professional harness. I use opencode and pi. But I had wondered whether they were inferior to the polished product from Anthropic, which comes from people with insane salaries.

But it also reinforces my suspicion that the only thing that Americans do really well is story-telling. Almost every American product turns out to be over-hyped crap.