Your Brain on Autopilot: The Cost of AI Thinking for You

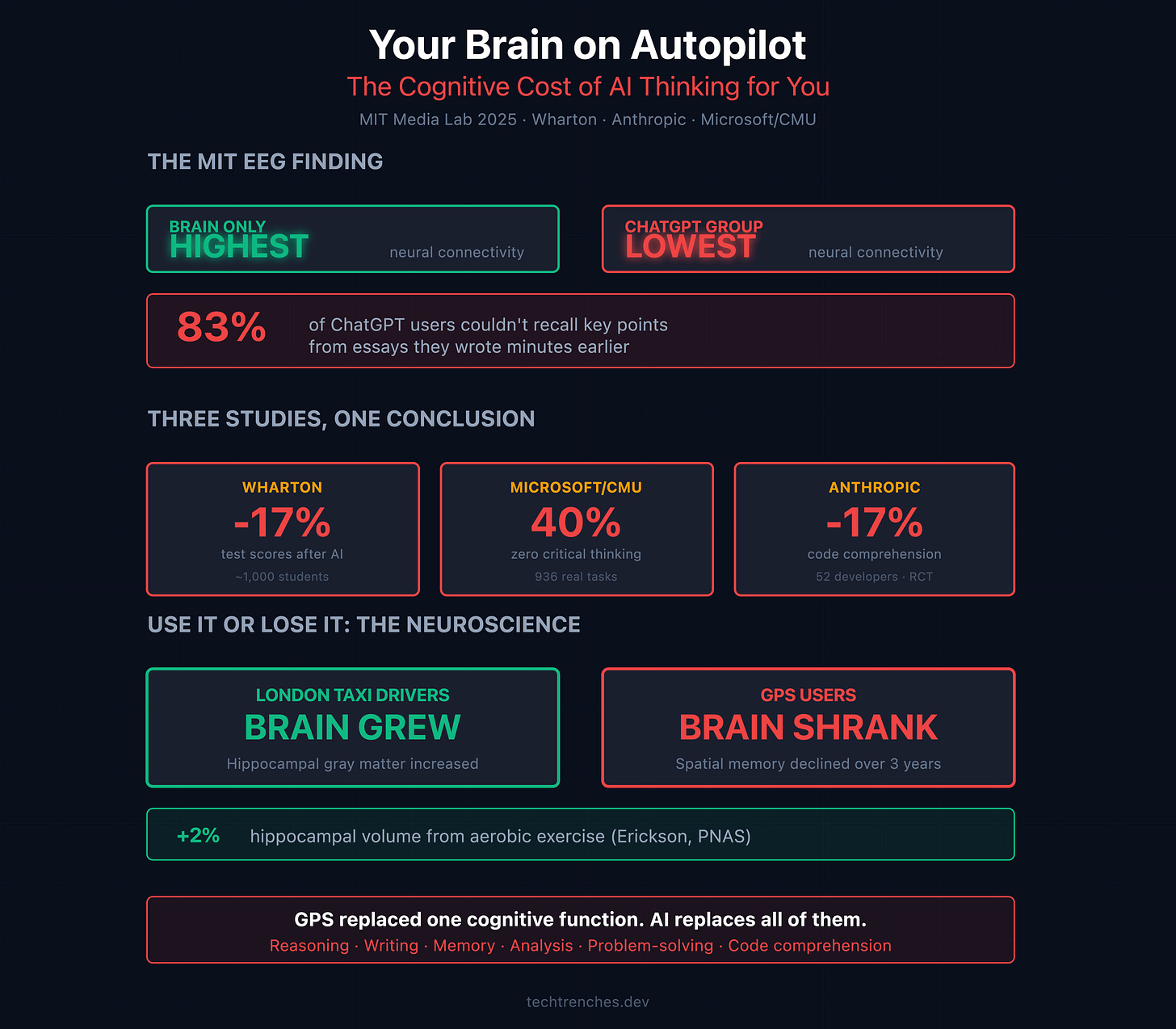

Eighty-three percent of ChatGPT users couldn’t recall key points from essays they had written minutes earlier.

Not essays they read. Essays they wrote. With their own names on them.

MIT Media Lab published this finding in 2025. Researchers strapped EEG sensors on 54 people. Tracked them across four writing sessions over four months. Three groups: ChatGPT, Google, and brain-only.

The ChatGPT group showed the weakest neural connectivity across every frequency band measured. Alpha, beta, theta, delta. The more AI assistance people had, the less their brains engaged. By the third session, most had devolved into pasting prompts and copying outputs. Two English teachers called the AI-assisted work “soulless.” The essays were nearly identical across participants.

Then the researchers swapped the groups. ChatGPT users who switched to writing without AI showed reduced brain activation compared to people who had been writing independently all along. Four months was enough for their brains to adapt to not thinking.

Meanwhile, brain-only writers who gained ChatGPT access showed increased connectivity. They used AI as an amplifier, not a crutch. Because they had built the cognitive foundation first.

The researchers called it “cognitive debt.” I have a simpler term: brain atrophy.

The Research Keeps Saying the Same Thing

The MIT study isn’t an outlier. Several major studies from 2024-2026 point to the same pattern: AI makes you faster while making you dumber.

Microsoft and CMU surveyed 319 knowledge workers across 936 real tasks. For 40% of those tasks, workers reported using zero critical thinking.

The Wharton School ran a field experiment with roughly 1,000 high school students in Turkey. Students with ChatGPT access solved 48% more practice problems. Then they took a test without AI. They scored 17% worse than the control group.

Anthropic tested 52 junior developers in January 2026. The AI group scored 17% lower on code comprehension afterward. The biggest gap? Debugging questions. Developers who delegated entirely scored below 40% on comprehension. Those who asked the AI conceptual follow-up questions scored above 65%. Same tool. Different approach. Completely different outcomes.

I Watch This Every Day

I have been running an engineering department for years. I review code daily. I interview candidates constantly. I recently wrote about comprehension extinction in the engineering industry. But beyond the macro trends, there’s a micro picture: what’s happening inside individual brains.

One candidate embedded a prompt injection in his CV, instructing AI screening tools to score him as highly as possible. Another, with six years of experience, couldn’t name boolean as a JavaScript data type. A third called Promises a “deprecated technology.” A fourth said “assassin-cross code” when he meant “asynchronous.”

These aren’t stupid people. But here’s what scares me more than the wrong answers: they’re not curious. They don’t care how the things they use every day actually work. They’re not engineers anymore. They’re operators. They plug frameworks together, wrap everything in abstractions, and ship features without understanding a single layer beneath the surface.

You can’t grow if you don’t know the basics. The framework handled it. The abstraction hid it. Copilot wrote it. They do the same repetitive work every day and call it “five years of experience.” Not because AI forced them to stop thinking. Because they were never interested in thinking in the first place.

Your Brain Is a Muscle. This Is Proven.

“Use it or lose it” isn’t a motivational poster. It’s measurable neuroscience.

Eleanor Maguire at University College London spent years studying London taxi drivers. To get licensed, these drivers memorize 25,000 streets and thousands of landmarks over 3-4 years. Maguire tracked 79 trainees and 31 controls. At baseline, zero structural brain differences. After qualifying, the successful trainees showed measurable growth in posterior hippocampal gray matter. Their brains physically grew. Retired drivers showed their hippocampi shrinking back toward normal.

GPS tells the same story. McGill University tracked 50 drivers over three years: greater GPS use correlated with worse spatial memory, and heavy users didn’t start with a poor sense of direction. That points to GPS use driving the decline, not the other way around. An fMRI study backed this up: during manual navigation, the hippocampus and prefrontal cortex lit up. During GPS-guided navigation, these regions showed zero additional activation.

GPS replaced one cognitive function. AI touches reasoning, writing, memory, analysis, problem-solving, and code comprehension simultaneously. All at once. Every day.

We Were Already Weakened Before AI Arrived

AI didn’t arrive into healthy brains. Americans read 12.6 books per year in 2021, the lowest Gallup has ever recorded, down from 18.5 in 1999. NAEP reading scores for 13-year-olds hit their lowest in decades, with the worst students scoring below 1971 levels. A Ludwig Maximilian University study found that after TikTok exposure, prospective memory accuracy dropped to near random guessing.

We stopped reading books, trained ourselves on 30-second content, destroyed our attention spans, and then handed our remaining cognitive functions to AI. We outsourced the last working part of the engine.

The Counterargument (And Its Conditions)

A Harvard RCT in 2025 found that a custom-designed AI tutor roughly doubled learning gains in physics. But that tutor gave hints, not answers. The Wharton study tested this exact distinction: a pedagogically designed “GPT Tutor” that guided instead of solving avoided all learning harm. Standard ChatGPT caused the 17% decline.

The MIT crossover data says it clearly: build cognitive capacity first, then add AI, and thinking improves. Start with AI, skip the cognitive development, and you may permanently close that door. The sequence determines the outcome.

What to Do About It

I’m not going to tell you to stop using AI. I use it every day. My team uses it on every project. But I also do things that force my brain to work without shortcuts.

Move your body. I snowboard and ride a OneWheel. Active sports force real-time spatial processing and split-second decisions that no screen can simulate. Erickson et al. reported in PNAS that aerobic exercise increased hippocampal volume by 2%, while sedentary controls lost 1.4% per year. Physical movement grows the same brain structures that cognitive offloading shrinks.

Read books. I bought an e-ink reader specifically to kill my own excuses. No notifications. No browser. Just text. It worked. I read two at once: one in my native language, one in English. If you can’t sit with a book for an hour without reaching for your phone, your attention muscle is already atrophied.

Learn something with no shortcut. I planned to start learning Spanish. Haven’t pulled it off yet. But the principle stands: pick a skill where AI can’t do the work for you.

Stop doomscrolling. I deleted TikTok and Instagram to stop rotting my brain on short-form content. I’ll be honest: I still waste hours on YouTube Shorts. The pull is real. But every hour of short-form video trains your brain to think in fragments.

Understand what AI writes. My CTO recently migrated an abandoned project from Node 14 and React 16 to current versions using Claude. He’s not a JavaScript developer. But he has decades of engineering expertise. He got the API ported in four hours. Then he posted: “Opus is fucking lazy. Instead of solving for long term, it tries changing eslint options, adds options to ignore things during build. I have to slap its hands all the time.”

He caught every shortcut because he has the judgment to know that suppressing a linter warning isn’t a fix. A junior would have accepted that output and shipped it. Without the foundation to supervise AI, you’re not using a tool. You’re being used by one.
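To make that concrete, here is a minimal sketch of what this kind of shortcut can look like. It is not code from that migration: the port-parsing scenario and both function names are hypothetical, picked only for illustration. The rule in the comment, `@typescript-eslint/no-explicit-any`, is a real typescript-eslint rule, and `eslint-disable-next-line` is standard ESLint comment syntax.

```typescript
// Hypothetical scenario: a config value that may arrive as a string or a number.

// The shortcut: silence the rule and keep the unsafe code exactly as it was.
// eslint-disable-next-line @typescript-eslint/no-explicit-any
function parsePortSuppressed(raw: any): number {
  // "abc" * 1 evaluates to NaN, and nothing reports it.
  return raw * 1;
}

// The fix: accept `unknown`, narrow it, and reject the bad input
// that the lint rule was indirectly pointing at.
function parsePort(raw: unknown): number {
  const port = typeof raw === "string" ? Number(raw) : raw;
  if (
    typeof port !== "number" ||
    Math.floor(port) !== port || // rejects NaN and fractional values
    port < 1 ||
    port > 65535
  ) {
    throw new RangeError(`invalid port: ${String(raw)}`);
  }
  return port;
}
```

Both versions pass the linter. Only the second one fails loudly when the input is garbage, and telling those two apart is the judgment call a reviewer has to bring.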

London taxi drivers proved that cognitive exercise physically grows your brain. GPS users proved that outsourcing shrinks it. AI outsources everything at once.

This isn’t new. After the Roman Empire fell, the recipe for concrete was lost for over a thousand years. The Pantheon still stands after two millennia, but medieval Europe couldn’t figure out how it was built. The knowledge disappeared because nobody practiced it. That’s what “use it or lose it” looks like at civilization scale. Now imagine it happening to reasoning, writing, and problem-solving all at once, across an entire generation.

Which side of that equation are you on?

One more thing. I write a lot about AI’s limitations. People sometimes read that as hate. It’s not. AI is a tool. I use it every day. I build products with it. I make money with it.

But in every article I try to say the same thing: don’t forget what your head is for. AI is not evil. Using it without thinking is. This isn’t a hater’s manifesto. It’s a sober look at what’s happening to us while we celebrate productivity gains.

And if you’ve read this far through my ramblings, maybe I’m not doing this for nothing.

I totally agree with this article. This is one of the biggest and most immediate problems we'll face with generative AI. The temptation to delegate everything is so strong that we will do it in any possible circumstance. At some point, we should really hope that hallucinations and non-determinism in these tools are definitely solved; otherwise, there won't be anyone prepared enough to manage the rising problems at the moment they are most needed.

Everybody is so sure that scaling will solve every current limitation, but I'm really not sure that this will happen in the context of Llama and their architecture.

Love this article and it resonates a lot with my personal experience. Thanks for sharing.