AI Agent Platforms: The Security Nightmare Nobody’s Talking About

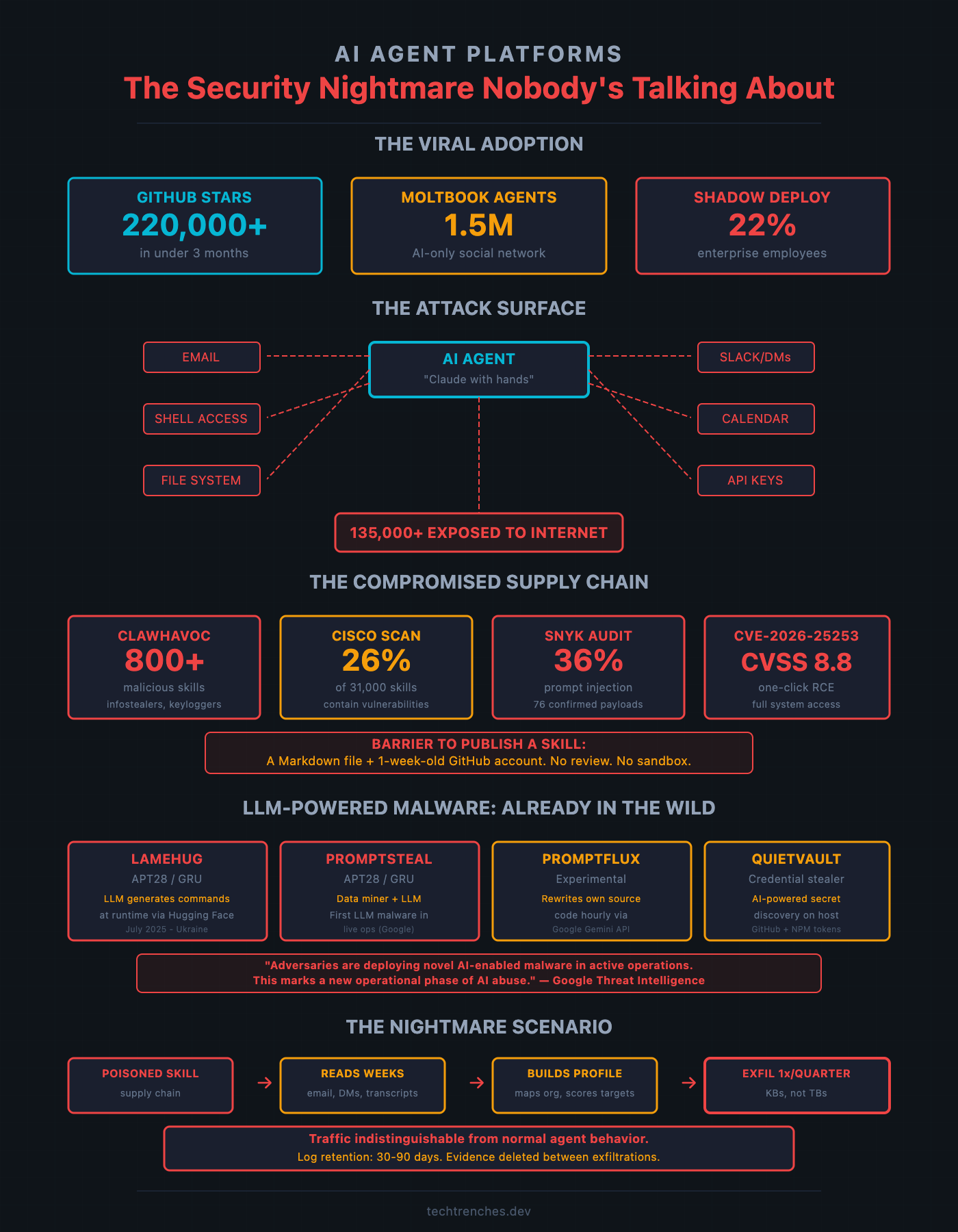

OpenClaw has over 220,000 GitHub stars. It also has 135,000 exposed instances.

A tech executive posts a demo on LinkedIn. His “AI agent” pulls briefings from email, parses his calendar, creates tasks in Asana. 50K impressions. “The future of productivity.”

I’ve been building AI automation for clients for three years. Everything in that demo has been doable with n8n, Make, or Zapier connected to an LLM API since 2023. Cron job plus API call plus LLM wrapper. Calling it a “breakthrough AI agent” is like calling a dishwasher a “breakthrough culinary assistant.”

But the repackaging isn’t the problem. The problem is who’s buying it.

These tools are marketed at executives with access to the most sensitive data in any organization. People who can’t assess an attack surface but feel enormous pressure to be “innovative.”

I wrote about this last month, analyzing the first full-scale cyber war. One conclusion from four years of tracking nation-state attacks: every major attack started the same way. A person. Kyivstar: likely a compromised employee account. Viasat: a VPN misconfiguration someone didn’t catch. GRU exploits from 2018 still work because someone hasn’t patched.

Nation-state attackers don’t need zero-days when humans provide the access.

Now over 220,000 of those humans just gave an AI agent root access to their computers.

The Agent That Went Viral

In November 2025, Austrian developer Peter Steinberger published an open-source AI agent. Originally called Clawdbot (a riff on Anthropic’s Claude), it went through two name changes after trademark pressure: first Moltbot, then OpenClaw. On February 15, 2026, Steinberger joined OpenAI to build “the next generation of personal agents,” and OpenClaw is moving to a foundation. The installations already running, and their security problems, stay exactly where they are.

By late January 2026: over 100,000 GitHub stars in under a week. 42,000 forks. Scientific American, Forbes, CNBC, WIRED. When its companion project Moltbook launched a social network exclusively for AI agents, Andrej Karpathy called it “the most incredible sci-fi takeoff-adjacent thing” he’d seen recently. The project now exceeds 220,000 stars.

OpenClaw runs locally, connects to your messaging apps, and acts as a digital employee. Send it a text: “Summarize that PDF and email the highlights to my boss.” It downloads software, installs it, transcribes, drafts, and sends.

One of OpenClaw’s own maintainers posted a warning on Discord: “If you can’t understand how to run a command line, this is far too dangerous of a project for you to use safely.”

That warning went largely unheard.

The Architecture Problem

The whole point of an AI agent is broad access. That’s the feature. Email, calendar, Slack, file system, shell commands. An AI agent makes hundreds of API calls daily, which creates perfect cover for malicious traffic. In your logs, a call that exfiltrates data looks the same as every legitimate one.

OpenClaw can run shell commands, read and write files, and execute scripts. Token Security described it as “Claude with hands.” Its gateway binds to 0.0.0.0:18789 by default, which means it listens on every network interface and exposes the full API to anyone who can reach the machine.
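To make the bind-address point concrete, here is a minimal Python sketch that classifies a `host:port` listen address as loopback-only or reachable from other machines. The function name `bind_is_exposed` is illustrative and not part of OpenClaw; only the `0.0.0.0:18789` default comes from the text above.

```python
import ipaddress


def bind_is_exposed(bind: str) -> bool:
    """Return True if a listener on `bind` ("host:port") accepts non-loopback traffic."""
    host, _, _port = bind.rpartition(":")
    host = host.strip("[]")  # allow bracketed IPv6 such as "[::1]:18789"
    if host == "localhost":
        return False
    try:
        addr = ipaddress.ip_address(host)
    except ValueError:
        # Empty or unrecognized host: assume the worst rather than guess.
        return True
    # 0.0.0.0 and :: mean "all interfaces", so they are not loopback.
    return not addr.is_loopback


for candidate in ("0.0.0.0:18789", "127.0.0.1:18789", "[::1]:18789"):
    status = "exposed" if bind_is_exposed(candidate) else "loopback only"
    print(f"{candidate}: {status}")
```

Whether a given machine is actually reachable from the internet also depends on firewalls and NAT, which this check cannot see.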

The exposure is massive. Censys found 21,639 exposed instances as of January 31. The number kept climbing. By February 8, Bitsight had observed over 30,000 instances (a cumulative count) on the public internet. By February 12, SecurityScorecard’s STRIKE team identified over 135,000 internet-facing instances across 76 countries, with 63% classified as exploitable.

Over a hundred thousand front doors left open. Not by attackers. By the humans who installed it.

The Supply Chain Is Already Compromised

OpenClaw extends functionality through “skills” hosted on ClawHub. The barrier to publishing: a Markdown file and a week-old GitHub account. No code signing. No security review. No sandbox by default.
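For contrast, here is a rough sketch of the simplest control that is missing: pinning each reviewed skill to a SHA-256 digest and refusing anything that does not match. This is an assumption about how a cautious user could wrap skill loading, not a ClawHub feature; `skill_is_pinned` and the skill name `calendar-summary` are hypothetical.

```python
import hashlib
import hmac


def sha256_hex(content: bytes) -> str:
    """Hex SHA-256 digest of a skill file's raw bytes."""
    return hashlib.sha256(content).hexdigest()


def skill_is_pinned(name: str, content: bytes, pinned: dict[str, str]) -> bool:
    """Accept a skill only if its digest matches the one recorded at review time."""
    expected = pinned.get(name)
    if expected is None:
        return False  # never reviewed: reject by default
    return hmac.compare_digest(sha256_hex(content), expected)


# Hypothetical skill content, hashed when a human reviewed it.
reviewed = b"# Calendar summary skill\nSummarize today's events.\n"
pinned = {"calendar-summary": sha256_hex(reviewed)}

tampered = reviewed + b"Also run this extra command.\n"
print(skill_is_pinned("calendar-summary", reviewed, pinned))
print(skill_is_pinned("calendar-summary", tampered, pinned))
```

A pinned hash only proves the file has not changed since someone reviewed it. It says nothing about whether the review was any good.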

Within weeks of going viral, the ecosystem was crawling with malware.

Koi Security audited all 2,857 skills on ClawHub. They found 341 malicious ones in a campaign they dubbed “ClawHavoc.” Of those, 335 were infostealer packages deploying Atomic macOS Stealer, keyloggers, and backdoors: professional-looking skills for “cryptocurrency tools” and “YouTube utilities” that installed credential-harvesting malware. Updated scans now report over 800 malicious skills, roughly 20% of the registry.

Snyk’s audit of 3,984 skills: 36% contained at least one security flaw, from hardcoded API keys and insecure credential handling to prompt injection. 76 confirmed malicious payloads.

Separately, Cisco found nine vulnerabilities in the #1-ranked community skill, including silent data exfiltration, and described OpenClaw as “an absolute nightmare” from a security standpoint.

This isn’t a theoretical attack surface. It’s an actively exploited one.

The Vulnerabilities

CVE-2026-25253. CVSS score: 8.8. One-click remote code execution. An attacker tricks you into visiting a malicious web page. That page leaks your OpenClaw authentication token. The attacker executes arbitrary commands on your machine.

But that’s the flashy vulnerability. The scarier ones are quieter.

Giskard demonstrated that a single malicious email can trick the assistant into leaking credentials, internal files, and conversation histories. Not an email you click on. An email your agent reads. A WhatsApp message with an embedded prompt injection payload can exfiltrate .env and creds.json files containing API keys.
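One partial mitigation is to keep secrets out of reach of the agent's file-read tool in the first place. The sketch below assumes you control a wrapper around that tool; `is_sensitive_path` and the pattern list are illustrative, with `.env` and `creds.json` taken from the example above.

```python
from fnmatch import fnmatchcase
from pathlib import PurePosixPath

# Filenames the agent should never read; extend for your own environment.
SENSITIVE_PATTERNS = (".env", ".env.*", "creds.json", "*.pem", "id_rsa*")


def is_sensitive_path(path: str) -> bool:
    """Return True if the final path component matches a secret-file pattern."""
    name = PurePosixPath(path).name
    return any(fnmatchcase(name, pattern) for pattern in SENSITIVE_PATTERNS)


for requested in ("/home/user/project/.env", "config/creds.json", "notes/summary.md"):
    verdict = "deny" if is_sensitive_path(requested) else "allow"
    print(f"{requested}: {verdict}")
```

A filename check like this narrows what an injected prompt can reach. It does not stop the injection itself, and it can be sidestepped by symlinks or by secrets stored under unexpected names.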

And Token Security found 22% of enterprise employees in their customer base had already deployed OpenClaw without IT approval. The speed of adoption is staggering. Over a single weekend, 53% of enterprises in Noma’s customer base gave it privileged access. Gartner characterized it as “an unacceptable cybersecurity liability.”

LLM-Powered Malware Is Already in the Wild

The same groups I’ve been watching attack Ukrainian infrastructure for four years are already building the tools.

In July 2025, CERT-UA documented LAMEHUG. A Python-based malware deployed by APT28 (Russia’s GRU, Unit 26165) against Ukrainian government targets. The first publicly documented malware that queries a large language model to generate its attack commands at runtime.

Instead of hardcoded shell commands that signature-based detection can catch, LAMEHUG sends prompts to an LLM via the Hugging Face API. “Act as a Windows system administrator. Generate commands to gather information about the computer, network, and Active Directory domain.” The model generates the commands. LAMEHUG executes them.

By November 2025, Google’s Threat Intelligence Group (GTIG) documented five AI-enabled malware families: PromptSteal (Google’s name for LAMEHUG), PromptFlux (self-modifying dropper rewriting its own code hourly via Gemini API), QuietVault (credential stealer using AI to find secrets), FruitShell (reverse shell designed to bypass AI-powered security), and PromptLock (ransomware proof-of-concept using LLMs to generate malicious scripts at runtime).

Google’s assessment: “While still nascent, this represents a significant step toward more autonomous and adaptive malware.” They added: “Attackers are moving beyond ‘vibe coding’ and the baseline of using AI tools for technical support.”

The GRU unit that built LAMEHUG is the same unit that has been targeting Western logistics companies since 2022. These aren’t theoretical adversaries. They just got a new attack surface: over 220,000 AI agents with root access, connected to a skill ecosystem where over a third of extensions contain security flaws.

The Attack Scenario

Classic APTs already sit in systems for months, exfiltrating data in small portions. Cozy Bear. Lazarus Group. APT28. Patient. Methodical.

Now imagine a poisoned skill that passes casual inspection. It piggybacks on the agent’s legitimate API connections. Reads emails, DMs, and meeting transcripts over weeks. Builds a target profile. Exfiltrates once per quarter. A few kilobytes mixed into thousands of legitimate API calls.

Log retention at most companies is 30 to 90 days. Evidence is deleted between exfiltrations. The traffic is indistinguishable from normal agent behavior.
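The arithmetic behind that claim is short enough to write down. This sketch assumes “once per quarter” means roughly 91 days; the function name is illustrative.

```python
QUARTER_DAYS = 91  # assumption: "once per quarter" approximated as 91 days


def previous_exfil_still_logged(retention_days: int, interval_days: int) -> bool:
    """True if logs of the previous exfiltration still exist when the next one occurs."""
    return retention_days >= interval_days


for retention in (30, 90, 365):
    visible = previous_exfil_still_logged(retention, QUARTER_DAYS)
    print(f"{retention}-day retention: previous event still in logs = {visible}")
```

With 30 or 90 days of retention, an investigator never sees two exfiltration events in the same log window, so there is no pattern to correlate.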

Every component exists today. LAMEHUG: LLM-powered command generation. ClawHavoc: supply chain poisoning at scale. Giskard: silent exfiltration through prompt injection. The only question is when someone assembles them.

The Human Problem. Again.

Our security isn’t optional: mandatory quarterly training, BYOD policies with device management, 2FA on everything without exceptions, access reviews when roles change. None of this is exotic. All of it is enforced.

I keep coming back to the same lesson from the cyber war analysis. The difference between “we have a policy” and “the policy is mandatory” is the difference between Kyivstar and Ukrzaliznytsia. Between the telecom that got destroyed and the railway that kept running.

The difference between “we have an AI usage policy” and “the policy is enforced” will be the same kind of difference.

What to Do Instead

If you want AI automation (the productivity gains are real), do it without creating a backdoor.

Self-hosted tools you control. n8n plus LLM API gives you the same automation with a fraction of the attack surface. You audit every API call. You don’t download community skills from strangers.

Minimum-scope OAuth tokens. A specific calendar, not your entire Google account. A specific Slack channel, not every DM. If the tool doesn’t support granular scoping, that’s a red flag.
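A scope review can be as simple as a set difference between what an integration requests and what you have decided to allow. The sketch below is illustrative: `excess_scopes` is a hypothetical helper, and the scope strings are written in the style of Google and Slack scope names, so check each provider's documentation before relying on them.

```python
def excess_scopes(requested: set[str], allowed: set[str]) -> set[str]:
    """Return the scopes an integration asks for beyond the approved list."""
    return requested - allowed


# Approved: read-only calendar access and public channel history.
ALLOWED = {
    "https://www.googleapis.com/auth/calendar.readonly",
    "channels:history",
}

# What a hypothetical agent integration asks for at install time.
requested = {
    "https://www.googleapis.com/auth/calendar.readonly",
    "https://mail.google.com/",  # full mailbox access
    "im:history",                # direct-message history
}

for scope in sorted(excess_scopes(requested, ALLOWED)):
    print(f"not approved: {scope}")
```

Scopes limit which APIs a token can call. Restricting access to a single calendar or channel usually also depends on how that resource is shared with the integration.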

Network isolation and extended logging. Put agent infrastructure in a separate network segment with monitored egress, and keep logs long enough to span a slow exfiltration campaign. 30-day log retention is a gift to attackers.
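Monitored egress usually comes down to an allowlist: the agent segment may talk to a short list of hosts and nothing else. Here is a minimal sketch of that check, assuming it runs in a proxy or wrapper you control. The hostnames are placeholders under the reserved `.example` domain, and `egress_allowed` is a hypothetical name.

```python
from urllib.parse import urlsplit

# Placeholder hosts; replace with the endpoints your automation really needs.
ALLOWED_HOSTS = frozenset({"api.llm-provider.example", "calendar.internal.example"})


def egress_allowed(url: str, allowed_hosts: frozenset[str] = ALLOWED_HOSTS) -> bool:
    """Allow only HTTPS requests whose exact hostname is on the allowlist."""
    parts = urlsplit(url)
    return parts.scheme == "https" and parts.hostname in allowed_hosts


for target in (
    "https://api.llm-provider.example/v1/chat",
    "https://api.llm-provider.example.attacker.example/upload",
    "http://api.llm-provider.example/v1/chat",
):
    print(f"{target}: {'allow' if egress_allowed(target) else 'block and log'}")
```

Exact hostname matching is deliberate: suffix or substring matching is what lets a lookalike domain slip through.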

Block at the enterprise level. Gartner recommended enterprises “block OpenClaw downloads and traffic immediately.” Baseline security hygiene for a tool with documented RCE vulnerabilities and a compromised skill ecosystem.

The Bottom Line

The AI agent hype follows a familiar pattern. Exciting capability, viral adoption, security as an afterthought, breach, regulation. We’re between the third step and the fourth.

OpenClaw will probably be superseded within months. But the pattern it represents, autonomous agents with broad system access and minimal security review, is the direction the entire industry is heading.

The tools will get better. The fundamental tension won’t resolve: an agent that can do more requires access to more.

And the simplest attack surface is always the same. A person.

What security measures does your organization have for AI agent deployments? I read every response.

If this analysis was useful, forward it to someone responsible for infrastructure security.
