AI’s Announcement Problem

Somebody decided not to buy it.

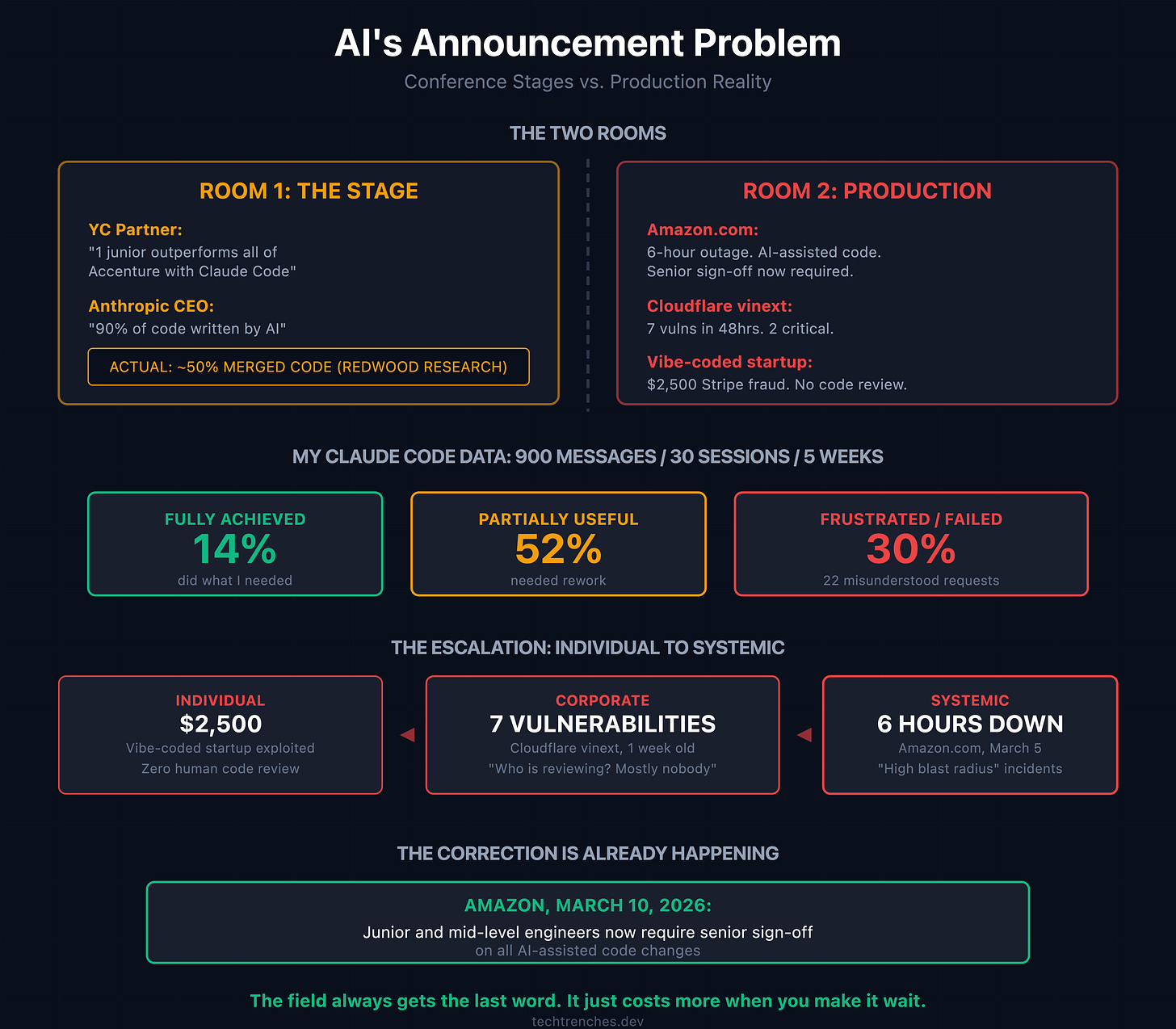

March 10, 2026. Amazon tells its engineers: junior and mid-level developers now require senior sign-off on all AI-assisted code changes.

Five days earlier, Amazon.com went down for six hours. Customers couldn’t check out. Couldn’t view prices. An internal briefing cited “high blast radius” incidents tied to “Gen-AI assisted changes” and “novel GenAI usage for which best practices and safeguards are not yet fully established.”

The company that pushed AI coding hardest just added friction to slow it down.

That’s not hype. That’s a correction. And it’s worth paying attention to, because the people announcing AI’s capabilities and the people dealing with its consequences are not in the same room.

The Claim

Tom Blomfield, YC Group Partner, tweeted in early February: “The entire Accenture workforce is about to be outperformed by a 24-year-old who learned Claude Code last Tuesday.”

When asked why Accenture specifically, he replied: “Because that would be a less punchy tweet.”

He knows the claim is wrong. He made it anyway because it performs well.

At the Council on Foreign Relations in March 2025, Dario Amodei said he thought AI would be writing 90% of code within three to six months. By September he claimed the prediction had come true. A Redwood Research analysis of actual Anthropic data found the average was closer to 50% for merged code, with select teams at 90%.

The headline was “AI writes 90% of code.” The actual number was “some teams, for some tasks, sometimes.”

These are the voices that dominate the conversation. They don’t run production systems. They don’t sit in post-mortems. They announce.

Here is what the rest of us are dealing with.

My Numbers

I use Claude Code daily. I have data from five weeks of tracked usage.

900 messages. 30 sessions. 14% fully achieved what I needed. 52% ended partially useful. 30% left me frustrated or dissatisfied. Across those sessions, 22 instances where the tool misunderstood requests: changed files I didn’t ask it to touch, guessed at APIs instead of reading the code, entered planning mode when I needed execution.

This is not a criticism of the tool. I keep using it because it’s faster for the right tasks. But it requires constant supervision, and the gap between what it does in a demo and what it does on a Tuesday afternoon when you need a specific database migration is enormous.

You can pull your own numbers. Type /insights in Claude Code. It analyzes your last 30 days of sessions and generates a report: where you spent time, where things broke down, what patterns keep repeating. I recommend doing this before forming an opinion about AI productivity. Your data will look nothing like the conference slides.

In late February, Alexey Grigorev, founder of DataTalks.Club, approved a terraform destroy command from Claude Code. He believed it would clean up duplicate infrastructure. It wiped everything: VPC, RDS database, ECS cluster, load balancers, bastion host. 2.5 years of submissions from 100,000 students, gone. The automated snapshots were deleted alongside everything else. He wrote the post-mortem himself.

AWS Business Support spent 24 hours finding a hidden internal snapshot. The data was recovered. Barely.

Grigorev took full responsibility. He was right to do so. The tool did exactly what it was told. That’s the point. When you use these tools in production, the failure modes are real. They cost money, time, and data. The conference stage never shows this part.
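One way to add friction before approving a destroy is to read the plan mechanically first. What follows is a minimal sketch, not what Grigorev or Claude Code actually ran. It assumes the plan was exported with terraform plan -destroy -out=tfplan and then terraform show -json tfplan, and it reads the resource_changes entries of that JSON. The STATEFUL_TYPES set and every function name are hypothetical choices for illustration.

```python
import json

# Assumption: an illustrative, incomplete list of resource types that hold data.
STATEFUL_TYPES = {"aws_db_instance", "aws_rds_cluster", "aws_s3_bucket", "aws_ebs_volume"}


def load_plan(path: str) -> dict:
    """Load the JSON written by `terraform show -json tfplan`."""
    with open(path, encoding="utf-8") as fh:
        return json.load(fh)


def _deleted(plan: dict) -> list:
    """Resource change entries whose planned actions include a delete."""
    return [
        rc
        for rc in plan.get("resource_changes", [])
        if "delete" in rc.get("change", {}).get("actions", [])
    ]


def planned_deletions(plan: dict) -> list:
    """Addresses of every resource the plan would delete."""
    return [rc["address"] for rc in _deleted(plan)]


def stateful_deletions(plan: dict) -> list:
    """Addresses of deleted resources whose type is in STATEFUL_TYPES."""
    return [rc["address"] for rc in _deleted(plan) if rc.get("type") in STATEFUL_TYPES]
```

A wrapper could refuse to continue whenever stateful_deletions(load_plan("plan.json")) is non-empty, and print the full planned_deletions list for a human to read before anything is approved. It does not replace judgment. It only makes the blast radius visible before the command runs.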

The Escalation

The incidents are scaling with adoption. Not just individual engineers losing data. The pattern is climbing from personal to corporate to systemic.

February 28, 2026. A founder named Anton Karbanovich posts on LinkedIn: “My vibe-coded startup was exploited. I lost $2,500 in Stripe fees. 175 customers were charged $500 each before I was able to rotate API keys.” His Stripe secret key was in frontend JavaScript. Even a junior developer doing code review catches that in two minutes. Nobody reviewed the AI-generated code at all.
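The two-minute review can be partly automated. The sketch below is a hedged example of such a check, assuming only that Stripe secret keys begin with sk_live_ or sk_test_ and restricted keys with rk_, while publishable pk_ keys are meant for the browser. The function names, the file suffix list, and the minimum key length in the pattern are assumptions made for illustration.

```python
import re
from pathlib import Path

# Secret ("sk_") and restricted ("rk_") keys must never ship to the browser.
# Publishable keys ("pk_") are intentionally not flagged.
# Assumption: at least 10 alphanumeric characters follow the prefix.
SECRET_KEY_PATTERN = re.compile(r"\b(?:sk|rk)_(?:live|test)_[0-9A-Za-z]{10,}")

# Assumption: file types that typically end up in a frontend bundle.
FRONTEND_SUFFIXES = (".js", ".jsx", ".ts", ".tsx", ".html")


def find_stripe_secrets(text: str) -> list:
    """Return every substring of text that looks like a Stripe secret key."""
    return SECRET_KEY_PATTERN.findall(text)


def scan_frontend(root: str) -> dict:
    """Map each frontend file under root to the secret-looking keys it contains."""
    hits = {}
    for path in Path(root).rglob("*"):
        if path.is_file() and path.suffix in FRONTEND_SUFFIXES:
            found = find_stripe_secrets(path.read_text(errors="ignore"))
            if found:
                hits[str(path)] = found
    return hits
```

Run against a build output directory, a non-empty result from scan_frontend is a reason to block the deploy. A pattern match like this catches only the obvious case; it is a floor for review, not a substitute for it.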

Four days earlier. Cloudflare ships vinext: a full Next.js rewrite, one engineer, one week, Claude Code. Goes viral as proof of ~100x AI productivity gains. Buried in their own blog post: “vinext is experimental. It has not yet been battle-tested with any meaningful traffic at scale.” The GitHub README: “Who is reviewing this code? Mostly nobody.” Within 48 hours, Vercel found 7 security vulnerabilities: 2 critical, 2 high, 2 medium, 1 low. One was identical to a Next.js vulnerability reported and patched years earlier.

The ~100x claim is real for one specific case: rewriting well-tested existing software with clear requirements. That qualifier didn’t make it into the retweets.

Same week. An autonomous security agent broke into McKinsey’s AI platform Lilli. Two hours. No credentials. Full read and write access to the production database. 46.5 million chat messages about strategy, M&A, and client engagements. 728,000 confidential files. 57,000 user accounts. 384,000 AI assistants deployed for 58,000 employees. The system prompts were writable. One SQL injection could have poisoned every answer Lilli gave to 40,000 consultants. McKinsey patched within a day. But for two years, the world’s most expensive consulting firm ran its AI platform with 22 unauthenticated endpoints. I wrote about this exact pattern in AI Agent Security. Nobody listened then either.

Individual failure. Corporate failure. Systemic failure. Same root cause: AI-generated code moving faster than human judgment can follow.

I’ve Seen This Before

Not in tech.

August 18, 2025. Closed-door meeting at the White House. Zelensky showed up with a PowerPoint titled “Making US-Ukraine Drone Industry Great.” Ukrainian interceptor drones had been shooting down Shaheds at $1,000 to $2,500 per intercept. Four years of combat data. Cost per kill, failure rates under jamming, how Iranian designs adapted. He proposed building drone defense hubs across the Middle East.

Trump asked his team to work on it. They didn’t.

A US official explained why: “We figured it was Zelensky being Zelensky. Somebody decided not to buy it.”

Six months later, seven American service members were killed by Iranian drone attacks across nine countries. The White House scrambled to ask Ukraine for help. Three days later, Ukrainian teams were already in Jordan. Trump’s sons then announced a company to sell Ukrainian drone technology to the Pentagon.

The people with the most field data were dismissed. The people who dismissed them ended up paying for the knowledge they refused.

This is exactly what’s happening in AI right now. The engineers with years of production data on what these tools actually do are not the ones being quoted. They’re too busy adding senior sign-off requirements and recovering databases from hidden snapshots. The announcers don’t run terraform destroy on production. They don’t debug six-hour outages. They don’t lose sleep over Stripe keys in frontend JavaScript.

They announce. The rest of us clean up.

The Two Rooms

There are two conversations about AI right now. One happens on conference stages and in Twitter threads. The other happens in Slack channels and incident retros. They don’t overlap.

I’ve been in the second room for years. Thousands of AI supervision sessions across my teams. The patterns are consistent. The tools help. They do not replace judgment, and they fail in ways that require deep system knowledge to detect.

The correction is already happening in the second room while the first keeps announcing.

The engineers who built judgment through years of production failures, late-night debugging, and system-level thinking are the ones writing the new guardrails. They’re the ones adding friction back into the process because they understand what happens without it.

Every time the field data was available and somebody decided not to buy it, the cost showed up later. Six-hour outages. $2,500 in Stripe fees on fraudulent charges. 2.5 years of student data hanging by a single hidden snapshot.

The data was always there. The people who had it just weren’t loud enough.

The gap between announcement and consequence isn’t always measured in outages and Stripe fees. Claude is integrated into Palantir’s Maven, the Pentagon’s targeting software. The Washington Post reported that it suggested hundreds of targets for the Iran strikes. An elementary school in Minab was hit on day one. Sometimes, room two isn’t a Slack channel. Sometimes it’s a coordinates list.