AI Is a Mirror of Our Engineering Culture

Most engineers in our industry are average or below average. That’s how averages work.

We trained the most powerful code-generation tools on their own output.

GitHub hosts over 518 million projects. The vast majority: personal, inactive, abandoned. Studies find that most repos are student projects, prototypes, 3 AM deadline code, unreviewed Stack Overflow pastes. Elite open-source projects like Linux and PostgreSQL match or beat proprietary code quality (Coverity Scan data, 2014). But they’re a vanishing fraction. The other 517 million projects drown them out.

The best enterprise code sits behind firewalls. Stripe’s payment processing, Netflix’s recommendation engine, Spotify’s audio streaming. None of it is in the training data.

When AI generates code, it reproduces the most probable pattern. RLHF shifts the output, but the training distribution anchors what “probable” means. Across 518 million projects, that’s mediocre code.

AI didn’t create our quality crisis. It held up a mirror.

The Training Data Nobody Audited

In January 2025, researchers published Cracks in The Stack, analyzing The Stack v2, a primary training dataset for code models. Bugs, security vulnerabilities, and license violations that propagate directly into generated code. Standard curation methods proved ineffective at removing them.

The fixes existed. They were committed to the same repositories. They just weren’t applied to the training data. StarCoder-family models were trained on known-broken code when the fixed version sat in the same commit history. Other models use proprietary datasets with unknown curation, but the underlying source material is largely the same public code.

StarCoder’s own documentation states that generated code “can be inefficient, contain bugs or exploits.” The entire industry ships tools it knows produce broken code and buries the admission in a readme.

The Feedback Loop That Should Terrify You

AI-generated code is entering the codebases that future models will learn from. Copilot generates 46% of code for its users. GitHub excludes enterprise users’ code from training, but free-tier code is eligible, and Copilot isn’t the only path. AI-generated code lands in Stack Overflow, blog posts, open-source repos, and every corpus that feeds the next training run.

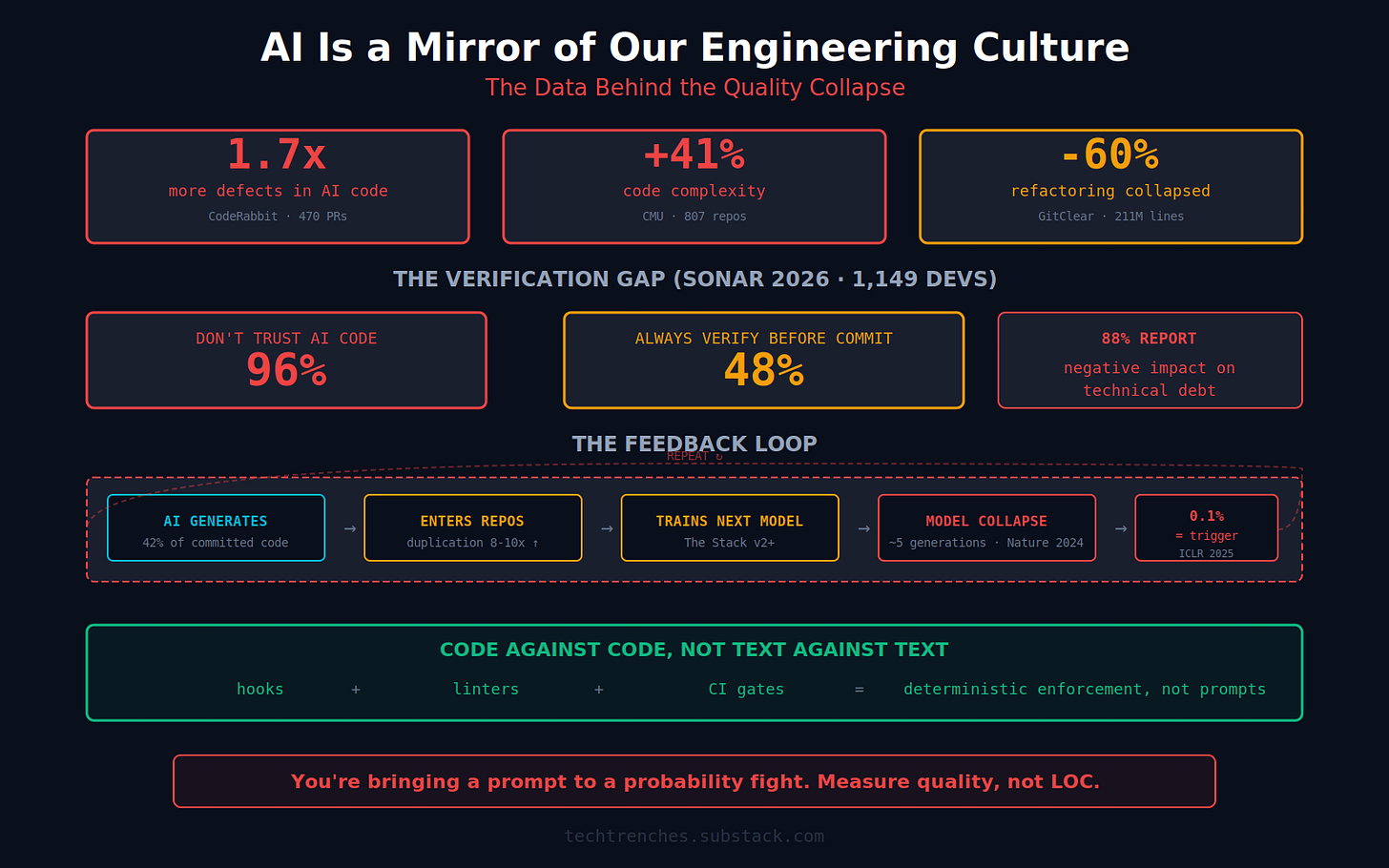

Shumailov et al. proved in Nature (July 2024) that models trained on recursively generated data collapse. An ICLR 2025 paper showed that even 0.1% synthetic data triggers it. Both studies focused on text and image models. Code has compilers and test suites, so the collapse may play out differently.

GitClear’s 2025 report (211 million changed lines from its customer base, 2020-2024) measured the degradation in practice. Refactoring collapsed from 25% to under 10%. Copy-paste surged from 8.3% to 12.3%. Code duplication increased roughly eightfold. For the first time, developers were pasting code more often than refactoring it.

An estimated 42% of committed code is now AI-assisted (up from 6% in 2023). Not every model trains on the same data. But they all train on the internet, and the internet is filling up with AI-generated code. It’s a centrifuge for technical debt.

Some companies see this as a problem. Others see it as a feature.

Spotify’s Engineers Haven’t Written Code Since December

During Spotify’s Q4 2025 earnings call on February 10, 2026, co-CEO Gustav Söderström said: “Our most experienced developers have not written a single line of code since December.”

They’re using an internal system called Honk, built on Claude Code, that lets engineers deploy features through Slack on their phones. An engineer on their commute tells Claude to fix a bug and merges to production before arriving at the office.

Spotify shipped 50+ features in 2025. When the engineer merging to production hasn’t read the code they’re deploying, what exactly is their role?

Spotify isn’t publishing quality metrics. Researchers are.

Speed at the Cost of Quality: The Data

Carnegie Mellon researchers tracked 807 open-source repositories that adopted Cursor between January 2024 and March 2025, comparing them against 1,380 matched controls. Enterprise codebases may behave differently.

Month one: velocity spiked 3 to 5x. Exactly the numbers that look spectacular on an earnings call.

Static analysis warnings increased ~30%. Code complexity rose ~41%. The velocity gains faded. The quality degradation persisted.

You borrow speed from tomorrow, and most teams never calculate the interest. During the study window, Cursor released agent mode and Claude 3.7 Sonnet launched. If model improvements were going to reverse the quality degradation, it would have shown up. It didn’t.

The Illusion of Correctness

GitClear identified something every engineering manager has witnessed: “the illusion of correctness.” AI-generated code looks clean: consistent naming, well-formatted, modern patterns. The neatness creates false confidence.

Short-term bug frequency dropped 19%. Over six months, it rose 12%. The bugs don’t disappear. They hide. They surface after the feature has shipped and everyone’s moved on.

CodeRabbit’s analysis of 470 GitHub PRs confirmed it: AI-generated code contained 1.7x more defects. Logic errors 75% more common. Security issues up to 2.74x higher. (CodeRabbit sells AI code review tools, so same caveat as Sonar applies.)

The Sonar 2026 survey (1,149 developers) crystallized the paradox. 96% don’t fully trust AI-generated code. Yet only 48% always check it before committing. 88% reported negative impacts on technical debt. The top complaint at 53%: code that looked correct but wasn’t reliable. (Sonar sells code quality tools, so take the framing accordingly. But the numbers align with GitClear, CMU, and CodeRabbit.)

Code that looks correct but isn’t, reviewed by engineers who don’t trust it but don’t check it either.

The Vampiric Effect

Steve Yegge spent a decade at Amazon and another at Google. In an interview with The Pragmatic Engineer, he called AI’s effect on engineers “vampiric.” Expect three productive hours per day. It gets you excited, you work hard, you capture value. Then you crash.

This tracks with what I observe at NineTwoThree. The engineers who get the most out of AI use it for two to three hours of intense, specification-driven work and spend the rest reviewing, thinking, and architecting. The ones who try full-day AI velocity burn out within weeks.

Degraded training data, velocity that fades while complexity stays, engineers too exhausted to catch what AI gets wrong. None of this started with AI.

What the Mirror Actually Shows

The quality crisis didn’t start with AI. I wrote about this in Software Quality Collapse. We normalized catastrophe long before the first line of AI-generated code was committed. Then we fed it into training data. Even the companies building the AI tools have the same problem: Claude Code’s source leaked and showed that the tool writing our code was built by the same engineering culture that produced the training data.

Vague specs, declining refactoring, velocity-as-productivity. AI just made it impossible to compensate with tribal knowledge. Senior engineers used to “just know” the right answer. AI can’t do that. It reproduces ambiguity faithfully and at scale.

But the part that keeps me up at night is the junior pipeline. I run hiring at NineTwoThree. I wrote about the comprehension collapse I’m seeing in candidates. It’s getting worse, not better. The tasks we used to give juniors, like the 4 AM production crash that taught me to never ship on a Friday, don’t exist as a learning mechanism if Claude fixed it at 8 PM while the engineer was on the bus. We’re eliminating the pipeline that produces the people who are supposed to review AI output. In five years, who’s left?

I’ve supervised thousands of AI coding sessions across my teams. The pattern is always the same: the model produces what you accept. If you accept a 3,167-line function, you get more 3,167-line functions. If your pre-commit hook rejects anything over 50 lines of cyclomatic complexity, you get clean code. The model doesn’t care. It adapts to whatever passes review.

What Actually Works

AI works when humans around it have strong engineering judgment. Without it, AI scales your worst habits.

I wrote an entire article about CLAUDE.md not working, blaming the models. Then I dug deeper and realized I was wrong about who to blame. The model isn’t choosing to ignore my rules. It’s doing statistics. My claude.md is one signal. The training data contains millions of examples where developers wrote as any, skipped tests, copy-pasted. For the model, my clean architecture is the outlier. The slop is the baseline.

That’s why prompts can’t fix this. Text competing against training data is a losing strategy. You’re bringing a prompt to a probability fight. The only thing that works is code against code: hooks that reject violations before they reach your branch, linters that catch as any before a human sees it, CI gates that fail the build.

The only thing that should bother you is quality, not LOC.

The Uncomfortable Truth

Companies bragging about engineers not writing code are making a bet, whether they know it or not. The bet: AI output doesn’t need human review if the metrics look good.

The snowball didn’t start with AI. It started with the first developer who shipped as any to make a deadline and the first manager who called it velocity.

Running an engineering shop that insists on code review, spec-first development, and deterministic enforcement feels like swimming upstream in a mountain river. Every earnings call screams 10x. The data in this article doesn’t.

The 10x is not real. The data is real. In two years, someone will have to debug a feature that was merged from a phone on a bus. Either there’s a human who read that code, or there isn’t.

I know which shop I’m running.

article about mediocre AI code from an mediocre chatbot, we've come full circle

OMG I can’t love this article more (retired software architect / bioinformatician)