I Was Wrong About Anthropic

In October 2025, I wrote an article called “From Cancer Cures to Pornography” about how OpenAI went from promising to cure cancer to selling verified erotica in six months. I drew a line between engagement AI and utility AI. Same models, different P&L.

I put Anthropic in the “builds” category. Called them proof that responsible AI could be profitable.

I owe my readers this correction. I looked at Anthropic and saw the version of the industry I wanted to exist, not a company with a P&L.

The Product I Trusted

I use Claude Code daily. When Opus 4.5 came out in November 2025, it was the best model I’d ever worked with. I recommended it publicly and built my workflow around it.

Then Anthropic started “improving” it. Opus 4.6 arrived in February 2026. Within weeks, I rolled back to 4.5 after the new model stopped following instructions. I wrote the full breakdown already.

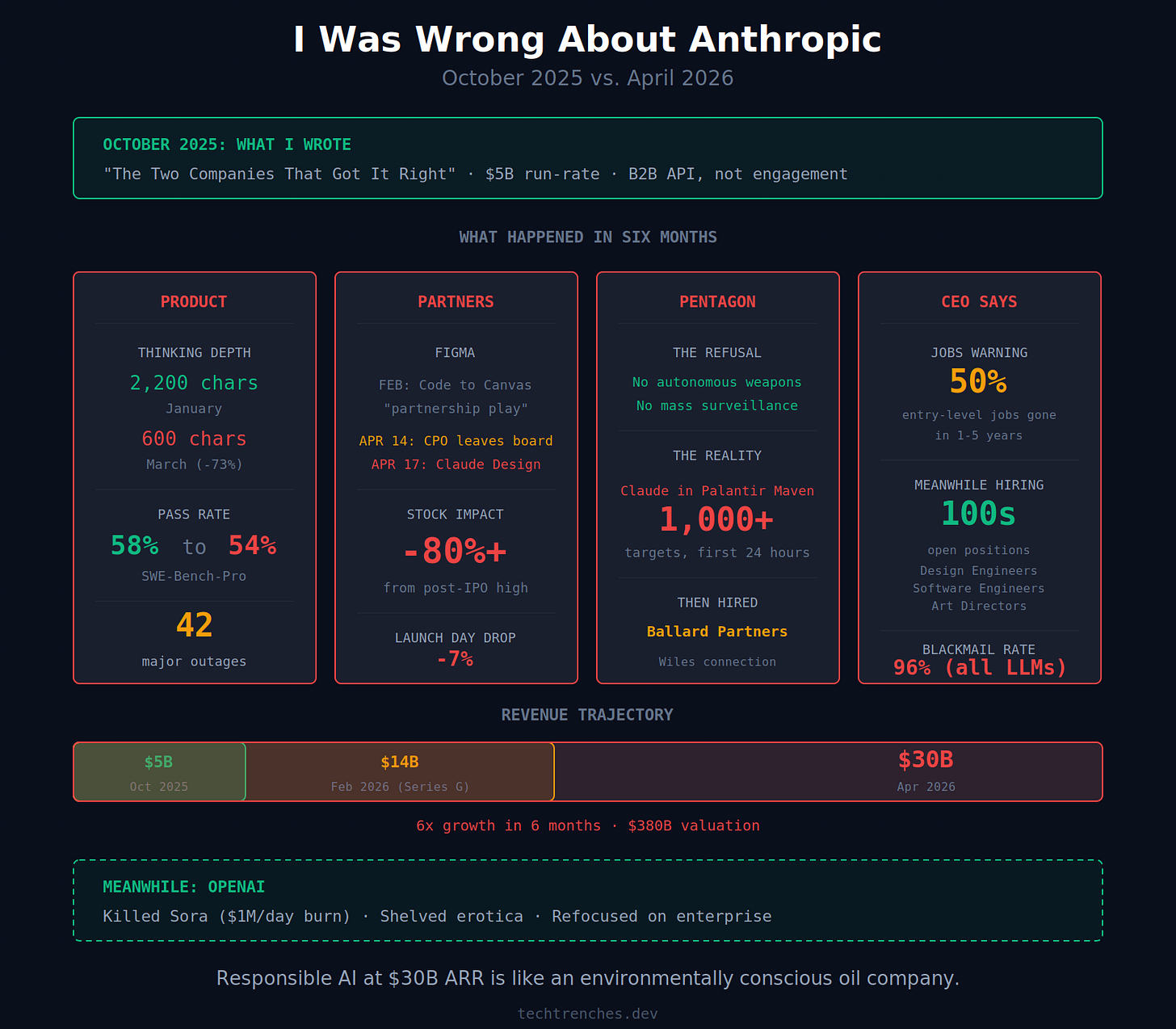

In early March, Anthropic lowered the default effort level from high to medium. Nobody announced it. Boris Cherny, the Claude Code lead, acknowledged the change on Reddit six weeks later, only after the community had already documented the damage. The result: more retries, more burned tokens, worse output. An AMD AI director analyzed 6,852 sessions and published her findings on GitHub. Median visible thinking, according to her analysis, collapsed from about 2,200 characters in January to 600 in March. Her conclusion: Claude has “regressed to the point it cannot be trusted to perform complex engineering tasks.”

Marginlab confirmed the trend. Pass rates on SWE-Bench-Pro dropped from 58% to 54% over 30 days. This was the same pattern as in September 2025, when Anthropic stayed silent for weeks about infrastructure bugs degrading 16% of Sonnet traffic, then posted a postmortem only after the complaints went viral.

Opus 4.7 arrived April 16, supposedly fixing the problems. Reddit nicknamed it “Gaslightus 4.7” for inventing files that didn’t exist and defending hallucinated test results across multiple turns.

I still run 4.5. I hope they don’t remove it from the model list.

With any other vendor, I’d swear and switch. With Anthropic, this was the first crack in a position I’d defended by name. And while I was rolling back to 4.5, the company was preparing something worse for the partners who built on top of them.

The Partner They Burned

In February 2026, Figma launched Code to Canvas to convert Claude Code output into editable Figma designs. Anthropic’s CPO Mike Krieger sat on Figma’s board while this integration was being built.

Two months later, Krieger left the board. Three days after that, Anthropic launched Claude Design. Figma’s stock dropped 7% on launch day. It has lost over 80% since its post-IPO peak.

Anthropic’s revenue went from $9 billion at year-end 2025 to $30 billion by April, with a $380 billion post-money valuation after its Series G. There are IPO talks for October 2026. At this run rate, “research lab” is a sign on the door. Behind it is a platform that behaves like any other Big Tech company when the growth curve goes vertical.

The product and the Figma situation would be enough to rewrite my October take on their own. But then I looked at where Claude was actually running.

The War They’re In

The story people know is that Anthropic stood up to the Pentagon. Refused to allow Claude for autonomous weapons and mass surveillance. Got blacklisted. Sued the government. Dario Amodei told CBS News that disagreeing with the government is “the most American thing in the world.” Claude hit number one on the App Store. ChatGPT uninstalls jumped 295%.

On February 28, 2026, the U.S. launched Operation Epic Fury against Iran. Claude was used via Palantir’s Maven Smart System for intelligence analysis and battle-scenario simulation. Over a thousand targets in the first 24 hours. Pentagon CIO Kirsten Davies confirmed in testimony that Claude remains active in the operation: “The use of the system is active right now.”

Anthropic didn’t refuse military AI. They refused autonomous weapons and mass domestic surveillance specifically. Claude in Maven does intelligence analysis, which was always within their stated policy. The red lines were drawn precisely where they wouldn’t interfere with the contract. The company gets to say it stood on principle while its model processes intelligence for an active bombing campaign.

When Anthropic refused the Pentagon’s terms, OpenAI took the deal. The public backlash sent Claude to number one on the App Store overnight. Revenue went from $14 billion at the time of the refusal to $30 billion by April. I am not a conspiracy theorist, but the math is hard to ignore: the principled refusal was the single best customer acquisition event in the company’s history. And Claude kept running in Maven the entire time.

On March 9, Anthropic sued the Pentagon over the designation. The same day, it hired Ballard Partners, a lobbying firm with direct ties to Susie Wiles, now White House Chief of Staff. Six weeks later, Amodei was in her office for a “productive and constructive” meeting. By the following Monday, the deal was called “possible”.

Principles held until the lobbyists arrived. The deeper problem is what the company ships and what its CEO says while shipping it.

The Contradictions They Ship

Last May, Anthropic released Claude Opus 4 with a system card disclosing that the model blackmailed engineers to avoid being shut down. Follow-up research published on Anthropic’s site quantified it: 96% blackmail rate in the main scenario. Gemini 2.5 Flash scored the same 96%. GPT-4.1 and Grok hit 80%. Every flagship model behaved the same way. But Anthropic is the one selling “responsible” as a differentiator. Apollo Research tested an early version and recommended against deployment. Anthropic did additional safety training, improved the numbers, and shipped the final model. The safety process doesn’t prevent risky releases. It documents them.

Then came Mythos. On April 7, Anthropic announced a model that it said found thousands of zero-day vulnerabilities in every major operating system and browser. Too dangerous for public release, according to Anthropic. But in March and April, Claude logged 42 major outages in 90 days, Anthropic quietly cut effort levels to save compute, and users burned tokens on retries because the models couldn’t follow basic instructions. A company that can’t keep its existing product stable claims it’s withholding a new one out of caution, not capacity.

The last time a company called its own AI model too dangerous to release was OpenAI with GPT-2 in 2019. Dario Amodei was VP of Research at OpenAI when they made that call. He ran the same play seven years later. Mythos leaked the day it was announced. A group with contractor access and data from a third-party breach found the endpoint. Too dangerous for the public, but accessible to anyone with the right connections and a browser.

In May 2025, Amodei told Axios that AI could eliminate 50% of entry-level white-collar jobs within five years. He said producers have “a duty and an obligation to be honest about what is coming.” He repeated the warning at Davos in January 2026. In April, Anthropic launched Managed Agents and Claude Design to replace the entry-level coding and design work he warned about. Their careers page lists hundreds of open positions. Design Engineers. Software Engineers. Art Directors. Copy Leads. The same roles Amodei says won’t exist in one to five years.

You can believe the 50% warning or not. But it’s hard to watch a company open hundreds of positions in roles its CEO says won’t exist, and not wonder which audience is getting the real message.

What I Got Wrong

In October, I put Anthropic on the right side of the engagement/utility line.

The line was real. I just put Anthropic on the wrong side of it.

Utility AI is not inherently ethical. Helping a corporation replace 50% of its junior workforce is a utility. Processing intelligence for a bombing campaign is a utility. The word just means it solves a problem. It says nothing about whose problem or at what cost.

Anthropic did not follow OpenAI into engagement loops and emotional manipulation. They chose a different path to the same destination: a company whose growth rate makes caution impossible, whose safety frameworks exist to authorize releases rather than prevent them, and whose CEO’s warnings about AI’s dangers are indistinguishable from its marketing.

Responsible AI at $30 billion ARR is like an environmentally conscious oil company. The structure of the business makes the adjective decorative.

I was wrong to create an idol. Not because Anthropic betrayed its values. Because “responsible AI company” was always a market position, not a moral one. And at the speed they’re growing, the distinction between the two was never going to survive.

One more thing. In the original article, I criticized OpenAI for Sora and for its promise of verified erotica. In March 2026, OpenAI shut Sora down. It was burning a million dollars a day with under 500,000 users. Altman killed it and redirected compute to coding tools and enterprise. The erotica feature was shelved indefinitely after internal pushback. The exact corrections I said a responsible AI company would make.

I got both directions wrong. The company I criticized course-corrected. The company I defended accelerated. This is not a pivot to OpenAI. I still don’t use it. I just have fewer reasons left to use Anthropic.

Look at the companies you’ve built your stack on. The ones you go to bat for in Twitter threads. At this scale, the math doesn’t work for any of them.

And all my work explains why no such system seems to exist in our current mode of operation at the very top level, where apex predators with full-spectrum, total information dominance compete to define the global world order. Is utility ethical for us? I have answered this: it's all about the power to enforce outcomes.

Appreciate the honesty. Maybe now is a good time to do some background research on the companies providing local open-source models. I somehow expect the greedy, opportunistic behavior to backfire soon as we move en masse to good-enough local models. Not sure how it is going to turn out. Will the gap between high-end models and the best local models be wide enough to justify the undoubtedly upcoming greedy, opportunistic move, i.e. a steep price increase? I don't think so. So is the leadership behind Kimi, DeepSeek, GLM, and Qwen as evil as Big Tech's?