The Human Cost of 10x: How AI Is Physically Breaking Senior Engineers

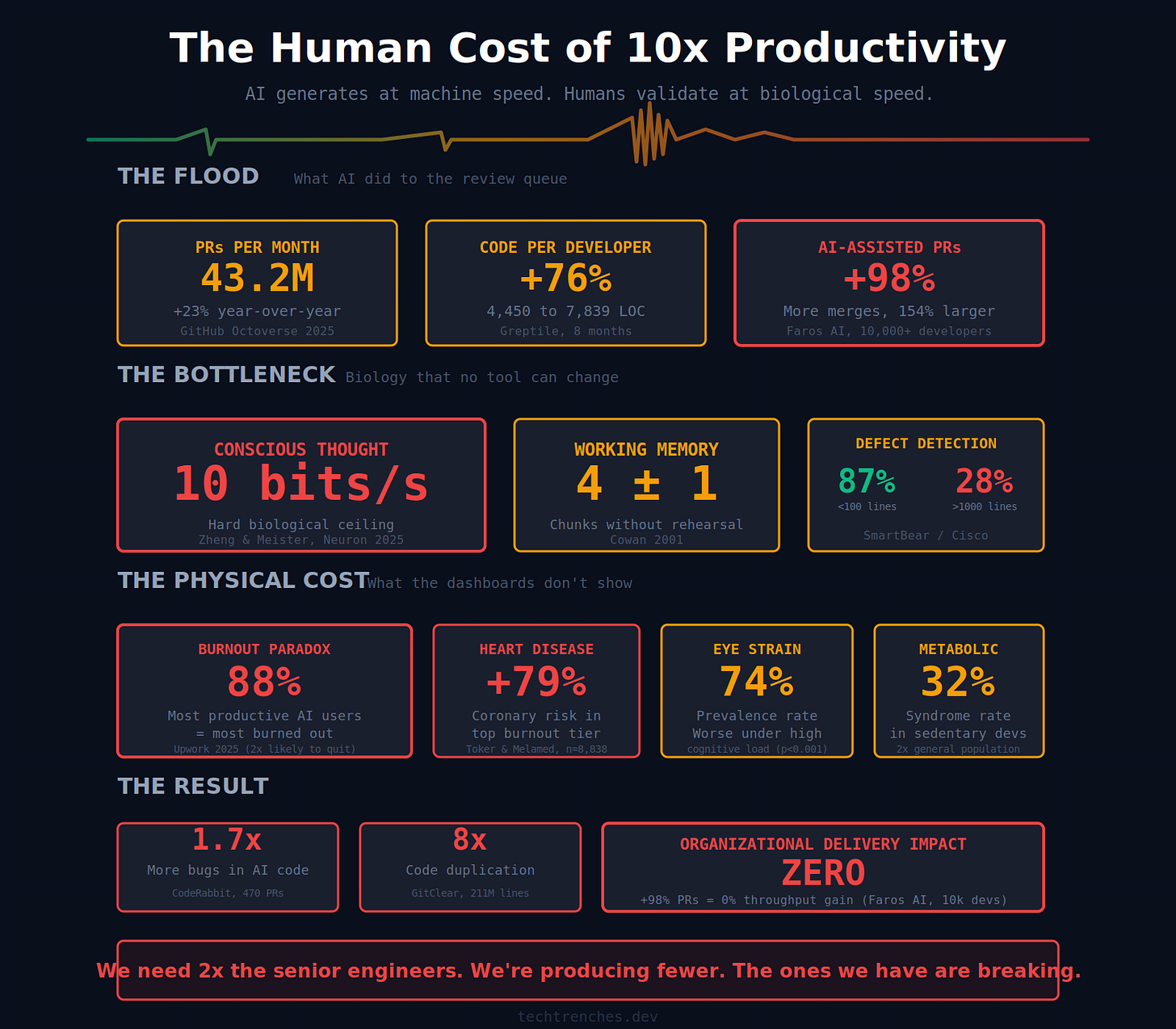

Your brain processes 10 bits per second. AI just increased your review queue by 98%. The math doesn’t work.

Last Tuesday, I stood up from my desk at 7 PM and felt a vacuum in the front of my skull. Not a headache. Not fatigue. A physical emptiness, like the frontal lobe had been running at redline all day and finally shut down. I stood there for ten seconds trying to remember what I was going to do next. Nothing came.

In the past year, the volume of information that passes through my brain on a single Tuesday has grown to what used to fill a week. Code review is the worst of it, but the real killer is the context switches. AI-generated PRs, client architecture decisions, three Slack threads about deployment issues, a candidate’s CV that needs review, an air defense alarm outside the window, then back to reviewing code that a machine wrote in seconds and I need hours to validate. Each of these demands a different mental model. Each one burns working memory. By 4 PM I’m making decisions I wouldn’t trust from a junior. By 7 PM my brain is physically empty.

The industry calls this “10x productivity.” I call it what it is: a system that generates output at machine speed and forces humans to process it at biological speed.

Workload Creep

In February 2026, UC Berkeley researchers published findings from eight months embedded inside a 200-person tech company. Over 40 in-depth interviews. Their conclusion: AI doesn’t reduce work. It intensifies it.

They found three mechanisms of “workload creep.” Task expansion: everyone’s scope inflates because AI makes it possible to do more. Blurred boundaries: AI prompting happens during lunch, commute, evenings. Implicit pressure: when colleagues visibly do more with AI, expectations rise for everyone.

The Upwork Research Institute quantified it: 77% of employees using AI say it has added to their workload. Not reduced. Added. 71% report burnout.

The finding that keeps me up at night: workers who report the highest AI productivity gains are the most burned out. 88% burnout rate among the “most productive” AI users. They’re twice as likely to quit.

The people who look best on your dashboard are the ones closest to walking out the door.

Your Brain Runs at 10 Bits Per Second

In 2025, Zheng and Meister published in Neuron that the human brain processes conscious, analytical thought at approximately 10 bits per second. Your sensory systems gather data at roughly 1 billion bits per second. But the bottleneck for code review, the part where you actually think, is 10 bits per second.

Working memory holds roughly 4 chunks of information at a time. The SmartBear/Cisco study established numbers everyone ignores: defect detection drops from 87% for PRs under 100 lines to 28% for PRs over 1,000 lines. Quality collapses after 60 minutes.
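
To make those thresholds concrete, here is a minimal sketch of a check that flags a review session once it crosses the sizes and durations quoted above. The names `ReviewSession` and `review_warnings` are hypothetical, and the only numbers used are the ones in the paragraph (100 lines, 1,000 lines, 60 minutes). Nothing is known here about PRs between 100 and 1,000 lines, so the sketch says only that they are outside the small-PR range.

```python
from dataclasses import dataclass

# Thresholds taken from the figures quoted in the text above.
SMALL_PR_LINES = 100        # below this, detection was reported at 87%
LARGE_PR_LINES = 1_000      # above this, detection was reported at 28%
MAX_FOCUSED_MINUTES = 60    # review quality is said to collapse after this


@dataclass
class ReviewSession:
    """Hypothetical record of one code review sitting."""
    lines_changed: int
    minutes_spent: int


def review_warnings(session: ReviewSession) -> list[str]:
    """Return human-readable warnings for a review that exceeds the thresholds."""
    warnings = []
    if session.lines_changed > LARGE_PR_LINES:
        warnings.append("PR is over 1,000 lines: reported defect detection is 28%")
    elif session.lines_changed >= SMALL_PR_LINES:
        warnings.append("PR is 100+ lines: outside the range where 87% detection was reported")
    if session.minutes_spent > MAX_FOCUSED_MINUTES:
        warnings.append("review has run past 60 minutes: stop and come back later")
    return warnings


if __name__ == "__main__":
    # A 13,000-line PR reviewed for 90 minutes trips both checks.
    for warning in review_warnings(ReviewSession(lines_changed=13_000, minutes_spent=90)):
        print(warning)
```

A check like this could run as a pre-review reminder, though where to hook it in depends entirely on your tooling.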

Now look at what AI did to the review queue.

GitHub’s Octoverse 2025 shows 43.2 million pull requests merged per month. Up 23% year-over-year. Lines of code per developer grew from 4,450 to 7,839 in eight months. A 76% increase.
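
The 76% figure follows directly from the two line counts. A quick check, using a hypothetical helper named `percent_increase`:

```python
def percent_increase(before: float, after: float) -> float:
    """Relative growth from `before` to `after`, expressed in percent."""
    return (after - before) / before * 100.0


# Lines of code per developer, as quoted above.
loc_growth = percent_increase(4_450, 7_839)
print(f"{loc_growth:.1f}%")  # prints "76.2%"
```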

Faros AI analyzed 10,000+ developers and found that those using AI assistance merge 98% more pull requests. Every single one lands on a senior engineer’s desk.

As MIT reported: juniors produce far more code with AI tools, but the sheer volume is saturating senior developers’ capacity to review. One OCaml maintainer rejected a 13,000-line AI-generated PR outright. Nobody had the bandwidth.

I wrote about the supervision tax recently. The METR data showed experienced developers actually got slower with AI tools while feeling faster. The gap between perception and reality is the most dangerous finding in any of this. You can’t fix what you can’t feel.

Why Expertise Makes It Worse

In 1983, Lisanne Bainbridge published “Ironies of Automation” in Automatica. Her core finding: the more sophisticated an automated system becomes, the more demanding the human role within it. What remains after automation is the most ambiguous, most complex, least supported work.

Microsoft Research confirmed this for generative AI in 2024: AI systems can make hard tasks even harder, leaving users with the same or increased cognitive load.

The mechanism is asymmetric. When I write code, I externalize a mental model that already exists. The thinking is done before the typing starts. When I review AI-generated code, I have to reverse-engineer somebody else’s reasoning out of an artifact produced by a system that has no idea what our business does. Fundamentally harder.

A Clutch survey of 800 software professionals found 59% of developers use AI-generated code they don’t fully understand. But seniors can’t afford that luxury. Their job is to catch what looks right but isn’t.

The Qodo report confirmed the cost distribution: senior engineers report the lowest confidence in shipping AI-generated code at 22%. Context pain increases with experience: 41% among juniors versus 52% among seniors. As I covered in cognitive offloading, most workers using AI skip critical thinking entirely. Seniors who do think critically, which is their entire job, absorb the cognitive cost everyone else offloads.

The Body Keeps Score

The cognitive damage is only half of it. The body takes the rest.

Computer Vision Syndrome affects 74% of screen users during periods of increased screen time, and digital eye strain severity gets significantly worse when cognitive load goes up. AI-intensified code review doesn’t just mean more screen hours. It makes each hour more physically damaging.

A 2024 meta-analysis covering 26,916 participants found burnout increases cardiovascular disease risk by 21%. Those in the upper burnout quintile had a 79% higher risk of coronary heart disease. The largest IT study found metabolic syndrome prevalence of 32% among long-term sedentary programmers. Double the general population.

Then sleep. Work-related rumination mediates the link between work stress and reduced sleep quality. When I close my laptop, my brain doesn’t stop. It replays the PR I didn’t finish. The dependency I flagged but couldn’t trace.

More code review during the day, worse sleep at night, worse decisions the next morning, more rubber-stamped PRs, more bugs in production, more stress. Repeat until something breaks. Usually the human.

The Dashboard Lies

GitClear analyzed 211 million changed lines. Duplicated code blocks increased eightfold. Code churn rose from 5.5% to 7.9%. AI-generated code averages 1.7x more bugs per PR than human-written code. Logic defects up 75%. Performance issues 8x more frequent.

Faros AI’s conclusion after analyzing 10,000+ developers: even though AI users merged 98% more pull requests, there was no measurable company-wide impact on delivery throughput or quality.

Sonar’s CEO identified the hidden danger: AI models are getting better at avoiding obvious bugs and security holes, but structural flaws now constitute more than 90% of issues. You’re being lulled into a false sense of security. The easy problems get solved. The hard problems get hidden beneath clean-looking code that passes every automated check. And the people who can find them are buried under a volume of output that exceeds human cognitive bandwidth by design.

More code. More bugs. More review burden. Same output. Worse humans.

The Math Doesn’t Work

Here’s what nobody is doing the arithmetic on. AI just grew the demand for senior engineering judgment by 76 to 98%. Every AI-generated PR needs a human who can catch what the machine got wrong, spot the structural flaw on line 847, trace a logic error three services downstream. The supply of those humans didn’t move. And as I’ve covered in the talent crisis and comprehension extinction, the pipeline that produces them is being hollowed out by the same tools creating the demand.
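
A rough sketch of that arithmetic, under loudly stated assumptions: the number of senior reviewers stays flat, the effort per PR stays constant, and the baseline of 10 review hours per week is a made-up illustration, not a figure from any of the studies cited. Only the 76% and 98% growth rates come from the text.

```python
def scaled_review_hours(baseline_hours: float, demand_growth_pct: float) -> float:
    """Weekly review hours after demand grows, with reviewer headcount held flat."""
    return baseline_hours * (1 + demand_growth_pct / 100.0)


# Hypothetical baseline: a senior who used to spend 10 hours a week on review.
BASELINE_HOURS = 10.0

low = scaled_review_hours(BASELINE_HOURS, 76)   # growth in lines of code per developer
high = scaled_review_hours(BASELINE_HOURS, 98)  # growth in merged pull requests

print(f"{low:.1f} to {high:.1f} hours per week")  # prints "17.6 to 19.8 hours per week"
```

Under those assumptions the review load nearly doubles with nothing else coming off the plate. If AI-generated PRs take longer to review than human ones, the real number is higher.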

But here’s where the senior engineer actually lives in 2026. Industry layoffs on one side, hundreds of thousands of engineers cut since 2022, the next round always one earnings call away. 10x productivity expectations on the other, set by people who have never reviewed an AI-generated PR in their lives. In the middle, somebody exhausted and burned out, with a choice to make every morning: trust the AI output, because it worked the last twenty times, didn’t it, or keep validating every line until the body gives out.

How long can the average human hold that line?

And the worst part: validating or trusting, the engineer owns the outcome either way. When production goes down at 3 AM, it’s your name on the commit. Your PR that got merged. Your incident report. There is no version of this choice where you’re not on the hook.

It’s a rhetorical question. We already know the answer. The data in this article is the answer.

If you’re a senior engineer feeling this in your body, you’re not alone and you’re not weak. The eye strain. The sleep that doesn’t restore. The vacuum in your head at the end of the day. You’re doing a job that didn’t exist eighteen months ago, with cognitive equipment that hasn’t changed in 200,000 years. Reply to this email and tell me what it feels like for you. I’m collecting data for a follow-up.


My current client is a small company, so although I'm in a senior role, I only have to deal with PRs from a few juniors. I feel the burn more on my own end, since I wear a lot of hats on such a small team and (under protest) have worked in Claude Code in hopes of improving throughput (a futile hope, I argue). So probably 50% of the LLM code I review in this ongoing experiment is code I prompted myself.

I also use it on pet/personal projects where the stakes are low. At worst, something only I use does something unfortunate. This is a fine use case for LLMs I think, but because I have been at this 20+ years I naturally check and recheck every line anyway. And that's where it gets weird. That's where I stare into the void.

It is very difficult to model the "mind" of a stochastic text generator. With other (human) programmers, I can have conversations with them, get a feel for their strengths and weaknesses, know when to second guess something that looks odd in their code and when to assume that, if the logic checks out, they had a reason for doing it the way they did.

Not so, at all, with chatbots. They will speak with absolute confidence in great depth and detail on any subject or specialty, then fuck up the most basic things, then spit out some fully functional if inelegant code, then, when asked for a small change, wrongly rewrite an unrelated half of their own code.

This is what fries my brain. With them it's contexts within contexts all the way down, all of them changing constantly according to some deranged Rube Goldbergian clockwork of tensors and matrices. Our brains are not made to deal with this fractal insanity. Huge portions of our psyche are built around dealing with other people, or people-like things, that exist within some boundary of predictability that can be discovered with observation and familiarity.

We leverage this subconsciously whenever we talk to our pets or plants, or see gods and spirits at work in the impersonal forces of nature, or wonder why our code or gadgets are misbehaving. But *especially* when something presents as human, like chatbots do, this whole evolved subconscious architecture kicks in automatically.

And then the bot breaks it. Over and over. And we get exhausted, as we would in a bad relationship with a person with serious issues. Because no matter how much we tell ourselves logically, consciously, that the bot isn't human and can't be anticipated like one, that's only a single tiny input to the much larger true neural network inside our heads that begs to differ. And yet finds itself confused and disappointed moment to moment with the digital demon with whom we're trying to communicate.

Good article, Denis, and I hope you are well and safe.

In my opinion generated code is by itself worthless. It can easily be created at will in any desired amount.

The actual “value” comes from someone accurately and precisely expressing their intent for how a machine should behave (best done in a formal language with unambiguous interpretation; we used to call this programming), and from that intent itself being correct, in the sense of properly and reliably solving whatever task or problem led the programmer to write the code in the first place.

This is why using AI to generate large amounts of code and then attempting to critically review it seems asinine to me. The AI cannot read your mind and figure out what your intent is. You have to express it, and if you can properly do so, then translating that to code was always trivial.

Sorry for the rambling.